Privacy and Ethics in Data Processing for Artificial Intelligence Applications

Understanding the Implications of Data Processing in AI

As technology continues to permeate daily life, the actions we take online produce vast quantities of data. This digital footprint is not only a resource for businesses but also a potential treasure trove for malicious actors. Data collection has become a focal point of concern, raising an essential question: who collects this data, and for what reasons? Companies often gather information for various purposes, from enhancing user experience to targeted advertising. However, the line between beneficial use and intrusive monitoring is increasingly blurred.

Another concern that warrants attention is the issue of consent. Many users may not fully grasp how their personal information is utilized. For instance, consider mobile applications that request access to one’s location, contacts, and other sensitive data. Often, users may unknowingly agree to extensive permissions without a clear understanding of the implications. This ambiguity complicates the trust relationship between consumers and service providers, highlighting the need for transparency in AI systems.

Data security is another critical aspect. High-profile breaches, such as those experienced by Equifax and Target, underscore the vulnerabilities in data handling processes. These incidents not only compromise personal information but can also lead to identity theft, financial loss, and lasting psychological impacts on individuals. Organizations must prioritize robust security measures, yet achieving this while maintaining efficiency remains a challenge.

Moreover, the question of bias in AI algorithms is increasingly prominent. Algorithms, often seen as impartial tools, can inadvertently perpetuate existing societal inequalities if not developed with care. For example, a facial recognition system may misidentify individuals based on ethnicity, leading to wrongful accusations or discrimination. This potential for bias speaks to a broader ethical dilemma: how do we ensure fairness in automated decision-making?

The California Consumer Privacy Act (CCPA) is one step toward addressing these concerns. The legislation reflects a growing societal demand for accountability and user empowerment regarding personal data usage. By requiring transparency and granting individuals rights over their information, such regulations aim to balance innovation with ethical considerations. Even so, technology often advances faster than regulation can follow, which leaves policymakers working to close the gap.

Taken together, these issues show that privacy and ethics in AI demand careful scrutiny. Navigating the challenges of data processing requires a multifaceted approach that encompasses legal frameworks, technological advancements, and ethical standards. As AI continues to evolve, so too must our understanding of and responses to these pressing issues, ensuring that we harness its capabilities responsibly while safeguarding individual rights and societal values.

The Complex Landscape of Data Ownership and Usage

In today’s data-driven world, the question of whether individuals truly own their personal data is increasingly contentious. The concept of data ownership is fraught with complexity, particularly as organizations derive immense value from consumer information. User-generated data, ranging from social media activity to shopping habits, can be monetized in ways consumers seldom realize, which feeds the unsettling notion that personal privacy is a commodity for sale.

According to a report from the World Economic Forum, the global data economy could be worth over $3 trillion by 2025. This valuation illustrates the stakes surrounding data processing and the need for stringent ethical frameworks. Consumers risk being reduced to data points in a vast stream of information flowing to corporations, which heightens their vulnerability to manipulative practices.

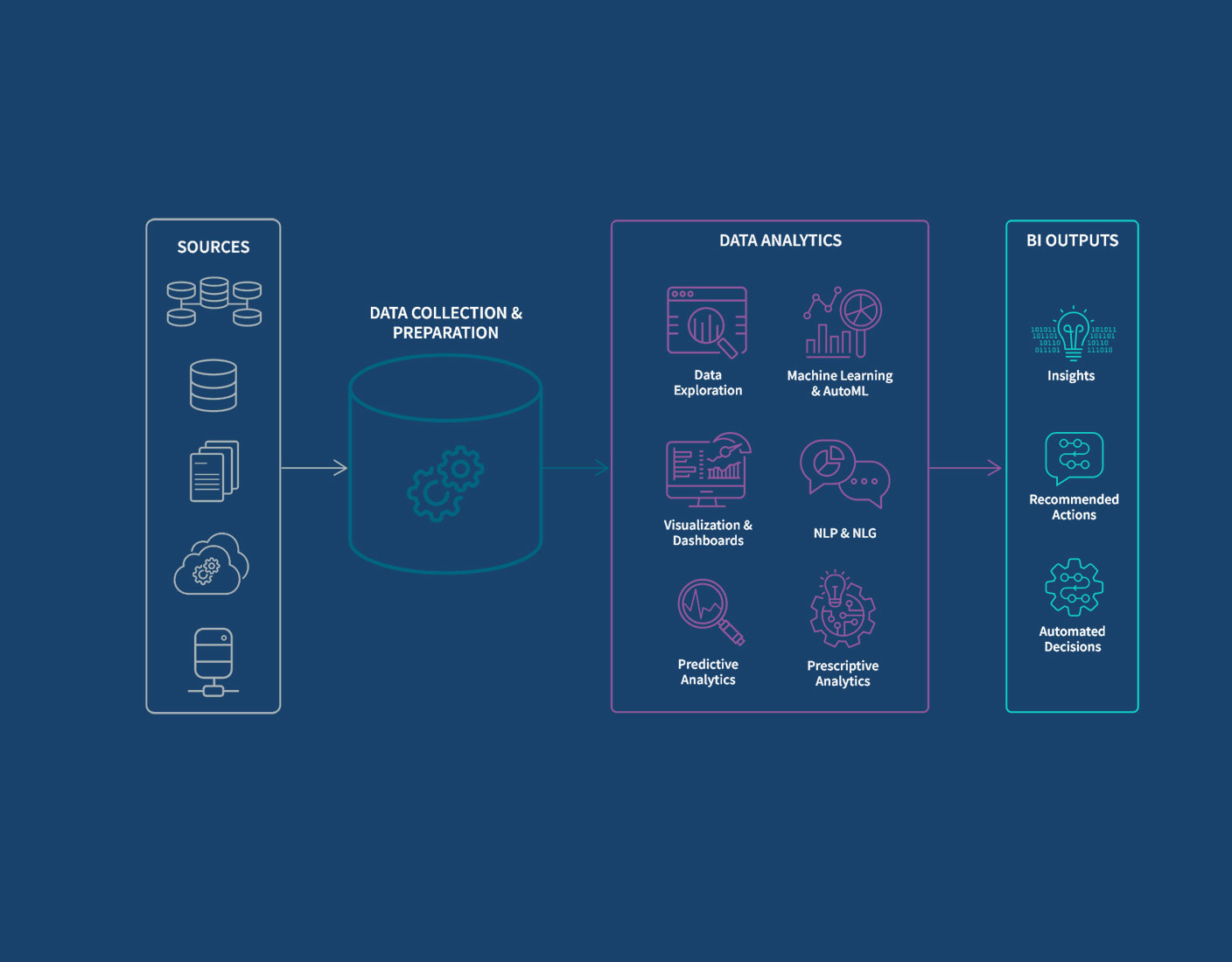

To navigate this landscape, users need to grasp what data is collected, who is collecting it, and the specific purposes for which it is being utilized. Below are some prevalent types of data that organizations may gather:

- Personally Identifiable Information (PII): This includes names, addresses, Social Security numbers, and other identifying details.

- Behavioral Data: Tracking how users interact with services, such as page views, time spent on a site, and click-through rates.

- Demographic Data: Age, gender, income level, and other characteristics that help organizations segment their target markets.

- Transactional Data: Information about purchases, service subscriptions, and interactions that signify consumer preferences.
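To make the categories above concrete, here is a minimal Python sketch of how a service might tag incoming fields by category and replace PII values with keyed hashes before storing them. The field names, the `FIELD_CATEGORIES` mapping, and the `pseudonymize` helper are all hypothetical, chosen only for illustration. Note that pseudonymized data can still be linked back to a person by whoever holds the key, so this is not the same as anonymization.

```python
import hashlib
import hmac

# Hypothetical mapping from field names to the categories listed above.
FIELD_CATEGORIES = {
    "name": "pii",
    "ssn": "pii",
    "page_views": "behavioral",
    "age": "demographic",
    "last_purchase": "transactional",
}


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Replace PII values with keyed SHA-256 hashes; keep other fields as-is.

    Fields missing from FIELD_CATEGORIES are treated as PII, a deliberately
    conservative default (an assumption of this sketch).
    """
    result = {}
    for field, value in record.items():
        if FIELD_CATEGORIES.get(field, "pii") == "pii":
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            result[field] = digest.hexdigest()
        else:
            result[field] = value
    return result


record = {"name": "Ada Example", "age": 36, "page_views": 12}
safe_record = pseudonymize(record, secret_key=b"replace-with-a-real-secret")
print(safe_record["age"])        # unchanged demographic value
print(len(safe_record["name"]))  # length of the hex digest that replaced the name
```

A keyed hash (HMAC) is used rather than a plain hash so that someone without the key cannot simply hash a list of known names and compare the results.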

Understanding the scope of this collection is essential for consumers to make informed decisions. However, the lack of clarity in privacy policies often leaves individuals unaware of how their information is being used. A survey by the Pew Research Center found that only 9% of Americans feel they have a lot of control over the data collected about them, reflecting a general unease around data management practices.

Compounding this issue is the challenge of achieving ethical data processing. As organizations race to innovate, they may overlook the moral implications of their algorithms. AI systems can inadvertently reinforce existing biases, as seen in sectors like finance and law enforcement, where algorithmic decision-making can yield disproportionate outcomes against minority groups. Addressing these ethical dilemmas is not just about compliance but also about fostering trust and fairness in the AI landscape.
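One simple way to check for the kind of disproportionate outcomes described above is to compare approval rates across groups. The sketch below is illustrative only: the function names and sample decisions are made up, and a single ratio is a starting point for investigation rather than proof of fairness or unfairness.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Given (group, approved) pairs, return the approval rate for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest; 1.0 means equal rates."""
    if not rates:
        raise ValueError("no groups to compare")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group had any approvals, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical loan decisions: group A is approved 3 times out of 4, group B once.
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = selection_rates(decisions)
print(rates)                                    # {'A': 0.75, 'B': 0.25}
print(round(disparate_impact_ratio(rates), 2))  # 0.33
```

A common rule of thumb from US employment practice (the "four-fifths rule") treats a ratio below 0.8 as a signal worth reviewing. Whether that threshold suits a given AI application is a judgment call.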

In light of these factors, striking a balance between harnessing data’s potential and protecting individual rights is crucial. As developments in AI continue to unfold, policymakers and technologists must collaborate to form robust guidelines that not only safeguard privacy but also instill ethical mindfulness in data processing practices.

| Advantage | Explanation |
|---|---|
| Enhanced User Trust | Transparent data processing practices increase user confidence in AI applications. Ensuring ethical handling of personal information proves a commitment to user privacy. |
| Compliance with Regulations | Following privacy regulations such as GDPR safeguards against legal issues and enhances brand credibility, promoting a responsible AI ecosystem. |
| Data Minimization | Collecting only essential data reduces the risk of breaches and misuse, demonstrating a proactive approach to privacy. |
| Ethical AI Development | Incorporating ethics into AI development leads to fair and unbiased algorithms, reflecting societal values and fostering innovation that respects user rights. |
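The data minimization row in the table can be sketched in a few lines of Python: before a record is stored or passed on, keep only the fields needed for the stated purpose. The purposes and field names below are hypothetical, and a real system would draw them from its own documented data inventory.

```python
# Hypothetical purposes and the fields each one actually needs.
ALLOWED_FIELDS = {
    "shipping": {"name", "address"},
    "analytics": {"page_views", "session_length"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Return a copy of the record containing only the fields the purpose needs."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        # Refuse to process data for a purpose that was never declared.
        raise ValueError(f"unknown purpose: {purpose!r}")
    return {field: value for field, value in record.items() if field in allowed}


signup = {
    "name": "Ada Example",
    "address": "1 Example Street",
    "birthdate": "1990-01-01",
    "page_views": 12,
}
print(minimize(signup, "shipping"))   # {'name': 'Ada Example', 'address': '1 Example Street'}
print(minimize(signup, "analytics"))  # {'page_views': 12}
```

Using an allowlist rather than a blocklist means that a newly added field is dropped by default until someone decides it is needed.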

In the evolving landscape of artificial intelligence, addressing privacy and ethical considerations is critical. As organizations increasingly rely on data-driven approaches, they must earn and maintain trust through transparency and ethical standards. Embracing these advantages not only attracts users but also positions businesses responsibly within the tech ecosystem.

The demand for compliance with global regulations reflects an essential shift towards ethics in AI. By adopting data minimization strategies and ethical development practices, organizations can mitigate risks and ensure fair treatment of individuals. These proactive measures not only create a safer digital environment but also enhance innovation pathways that align with societal values. Thus, understanding the intersection of privacy and data ethics is key to thriving in the future of AI applications.

Regulating AI: The Quest for Ethical Standards

The rapid evolution of artificial intelligence has sparked an urgent debate about the need for ethical standards and regulations governing data processing. Governments and organizations across the globe are grappling with how to legislate AI technologies that increasingly permeate everyday life. Notably, the European Union has taken a significant step with its General Data Protection Regulation (GDPR), a robust legal framework designed to empower consumers and impose strict guidelines on how personal data can be collected, processed, and stored.

In the United States, however, the regulatory landscape is less defined. Various states have begun implementing their own privacy laws, such as the California Consumer Privacy Act (CCPA), which grants consumers rights to access and delete their personal information. Nevertheless, the absence of a comprehensive federal data privacy law leaves many consumers vulnerable to exploitation in the data-driven marketplace. The interplay between state and federal regulations creates inconsistencies that undermine the trust consumers place in businesses handling their data.
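In software terms, the access and deletion rights mentioned above translate into two operations a data store must support. The following is a minimal in-memory sketch, with a hypothetical `PersonalDataStore` class. It leaves out what real compliance also involves, such as verifying the requester's identity, handling legal exceptions, and propagating deletions to backups and third parties.

```python
import copy


class PersonalDataStore:
    """Toy in-memory store supporting access and deletion requests."""

    def __init__(self):
        self._records = {}

    def save(self, user_id, data):
        """Add or update fields held about a user."""
        self._records.setdefault(user_id, {}).update(data)

    def access_request(self, user_id):
        """Return a copy of everything held about the user (empty if nothing)."""
        return copy.deepcopy(self._records.get(user_id, {}))

    def delete_request(self, user_id):
        """Erase the user's data; return True if a record existed."""
        return self._records.pop(user_id, None) is not None


store = PersonalDataStore()
store.save("user-1", {"email": "ada@example.com"})
store.save("user-1", {"city": "Sacramento"})
print(store.access_request("user-1"))  # {'email': 'ada@example.com', 'city': 'Sacramento'}
print(store.delete_request("user-1"))  # True
print(store.access_request("user-1"))  # {}
```

Returning a copy from `access_request` keeps callers from accidentally modifying the stored record.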

As public awareness of data processing practices grows, organizations face increasing pressure to demonstrate accountability in their AI applications. Missteps in ethical data usage can have dire consequences, as seen in high-profile breaches and scandals, such as the Facebook-Cambridge Analytica incident, where the misuse of user data led to a substantial erosion of public trust. This underscores the necessity for companies to move beyond mere compliance and genuinely prioritize ethical considerations in their data practices.

Moreover, the discourse around ethical AI has brought forth the concept of algorithmic transparency. As artificial intelligence systems influence significant decisions, it becomes critical to understand how algorithms arrive at their conclusions. Organizations such as the AI Now Institute advocate for making AI processes more transparent, allowing stakeholders to scrutinize the underlying data and algorithms for potential biases. This transparency can foster greater accountability and drive innovation toward more equitable outcomes.
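For simple models, transparency can be as direct as showing how much each input contributed to a decision. The sketch below assumes a hypothetical linear scoring model, with weights and feature names invented for illustration. More complex models generally need dedicated explanation techniques, which this example does not cover.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Return the score of a linear model and each feature's contribution.

    Contributions are sorted so the most influential feature, by absolute
    value, comes first. Features missing from the input count as 0.0.
    """
    contributions = {
        name: weight * features.get(name, 0.0) for name, weight in weights.items()
    }
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical credit-style model: the weights are made up for illustration.
weights = {"income": 0.5, "late_payments": -1.5, "account_age": 0.25}
applicant = {"income": 4.0, "late_payments": 1.0, "account_age": 2.0}

score, ranked = explain_linear_score(weights, applicant)
print(score)   # 1.0
print(ranked)  # [('income', 2.0), ('late_payments', -1.5), ('account_age', 0.5)]
```

An applicant or auditor reading this output can see both the decision and which factors pushed it up or down, which is the kind of scrutiny that algorithmic transparency aims to enable.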

In addition to transparency, diversity in AI development teams is also crucial for ethical data processing. Research suggests that diverse teams are more likely to identify and address potential risks associated with biased data, ultimately leading to fairer AI systems. Companies whose products shape social behavior should invest in inclusive teams that represent a wide array of perspectives and experiences, which benefits both their organizational ethics and their product accuracy.

The role of education cannot be overstated in this context. As the demand for AI expertise surges, it is critical to incorporate ethical training and data literacy into educational curriculums. By arming future AI developers, data scientists, and policymakers with ethical frameworks and an understanding of privacy issues, society can take strides toward a data landscape that emphasizes integrity and respect for individual rights.

Overall, the pathway to achieving ethical data processing in AI applications is complex and multifaceted. Collaboration among policymakers, technologists, and the public is imperative as we forge ahead in harnessing the transformative potential of data while remaining steadfast in our commitment to privacy and ethical standards.

Conclusion: Navigating the Ethical Landscape of AI Data Processing

The intersection of privacy and ethics in data processing for artificial intelligence applications demands urgent attention as AI technologies continue to shape modern society. The existing regulatory frameworks in the United States, while progressing at the state level with measures like the California Consumer Privacy Act (CCPA), highlight the need for a comprehensive federal approach to safeguard consumer rights uniformly. Without such a framework, disparities remain that can lead to significant vulnerabilities and mistrust.

Ethical considerations must transcend mere compliance; businesses must embed them into the fabric of their AI development processes. The Facebook-Cambridge Analytica scandal serves as a cautionary tale, reminding stakeholders of the repercussions of negligence in ethical data usage. Promoting algorithmic transparency and fostering diversity within development teams are essential steps to mitigate biases and cultivate accountability, ensuring AI applications benefit all segments of society.

Moreover, integrating ethical training and data literacy into educational institutions prepares future leaders to navigate this complex landscape. By equipping the next generation of AI developers and policymakers with a strong ethical foundation, we pave the way for a more responsible data ecosystem.

In conclusion, advancing the ethical processing of data in AI applications is a collaborative endeavor that requires engagement from governments, businesses, and consumers alike. As we look ahead, prioritizing privacy and ethical standards will not only enhance public trust but also ensure that technology remains a force for good, ultimately leading to a more equitable and just digital future.