The Importance of Data Quality in Efficient Processing for Artificial Intelligence

The Imperative of Data Quality in AI

In today’s landscape of artificial intelligence, where capabilities evolve at an astonishing pace, the significance of data quality cannot be overstated. Organizations across various sectors, from healthcare to finance, are waking up to the reality that the effectiveness of AI-driven endeavors is intricately linked to the quality of the data fed into these systems.

Understanding Data Quality

Data quality encompasses several critical dimensions, each contributing to the reliable functioning of AI systems:

- Data Accuracy: Inaccurate data can lead to erroneous conclusions and poor decision-making. For instance, if an AI tool incorrectly identifies a benign tumor as malignant due to misleading data, it could result in unnecessary treatments, emotional distress, and significant healthcare costs.

- Data Completeness: A dataset that is missing vital information can lead to oversights that undermine AI applications. Consider self-driving cars; lacking comprehensive mapping data can inhibit their ability to navigate safely, posing risks to passengers and pedestrians alike.

- Data Consistency: Inconsistent data can generate chaotic outputs and false predictions. For example, if an AI model used for credit scoring is trained on datasets with conflicting information, it might erroneously classify a responsible borrower as high-risk, hampering their ability to secure loans.
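
The three dimensions above can each be turned into a simple automated check. The following is a minimal sketch, assuming records arrive as Python dictionaries; the field names (`income`, `age`, `status`), the sample rows, and the helper functions are all hypothetical and only illustrate the idea.

```python
from collections import defaultdict

# Hypothetical loan-application records; field names are illustrative only.
records = [
    {"id": 1, "income": 52000, "age": 34, "status": "employed"},
    {"id": 2, "income": None, "age": 41, "status": "employed"},
    {"id": 3, "income": 61000, "age": -5, "status": "retired"},
    {"id": 3, "income": 61000, "age": 67, "status": "employed"},
]

def completeness(rows, field):
    """Completeness: fraction of rows where `field` is present and not None."""
    filled = sum(1 for r in rows if r.get(field) is not None)
    return filled / len(rows) if rows else 0.0

def out_of_range(rows, field, low, high):
    """Accuracy (crude): IDs of rows whose `field` falls outside [low, high]."""
    return [r["id"] for r in rows
            if r.get(field) is not None and not (low <= r[field] <= high)]

def conflicting_ids(rows, field):
    """Consistency: IDs that appear with more than one value for `field`."""
    seen = defaultdict(set)
    for r in rows:
        seen[r["id"]].add(r.get(field))
    return sorted(i for i, values in seen.items() if len(values) > 1)

print(completeness(records, "income"))       # 0.75
print(out_of_range(records, "age", 0, 120))  # [3]
print(conflicting_ids(records, "status"))    # [3]
```

A range check is only a rough proxy for accuracy: it catches impossible values such as a negative age, but not plausible values that happen to be wrong.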

The Broader Implications of Poor Data Quality

The repercussions of inadequately governed data systems are profound and far-reaching. In the realm of healthcare, AI models tasked with diagnosing diseases or predicting patient outcomes rely heavily on high-quality, comprehensive datasets. A recent study highlighted that an AI algorithm trained on substandard data produced diagnosis inaccuracies that could negatively impact patient care.

In financial predictions, reliance on flawed datasets may lead to misguided investment strategies, potentially resulting in significant financial losses. Imagine an AI advising an investment firm to allocate funds based on outdated economic indicators. Such scenarios underline the necessity for organizations to prioritize effective data governance frameworks to ensure the integrity of their data.

Ethical Considerations and Accountability

Maintaining robust data quality also raises questions of ethics and accountability in AI applications. Because AI can influence everyday life, whether through healthcare decisions or the allocation of jobs, ensuring data integrity becomes not just a technical requirement but a moral obligation. A lack of accountability can lead to societal inequalities and misuse of AI systems, further amplifying the need for strict data management practices.

Investing in data quality is not merely a technical adjustment; it is a strategic imperative for the future of intelligent systems. As organizations recognize that what matters is not just having data but having the right data, the focus shifts towards fostering a culture that embraces data integrity. Understanding data quality and its implications also sheds light on the evolving relationship between technology, ethics, and society.

Data Quality: The Cornerstone of AI Effectiveness

As organizations embark on the journey of integrating artificial intelligence into their operations, the spotlight increasingly shines on the quality of data they collect and utilize. But what precisely constitutes high-quality data, and why is it vital for the effective processing of AI? Understanding this fundamental relationship can illuminate the path towards more reliable, efficient, and ethical AI solutions.

The Dimensions of Data Quality

At the heart of data quality lie several interconnected dimensions that determine its suitability for AI applications:

- Data Relevance: The data must pertain to the specific context of the AI task. Irrelevant data can skew results, leading to inappropriate conclusions. For example, AI systems designed for weather forecasting that are trained on unrelated economic data may present predictions that bear no connection to actual weather patterns.

- Data Timeliness: Data must be current; outdated information can lead to misinformed decisions. In sectors like finance, relying on stale data to predict future market trends can result in lost opportunities and financial setbacks. Fresh insights are crucial to staying competitive and agile in a rapidly changing landscape.

- Data Validity: It’s essential that the data used is gathered using appropriate methods. AI models trained on datasets generated by erroneous methods or biased sampling may yield misleading results. For example, using survey data collected from a non-representative sample can lead to an AI model that inaccurately predicts consumer preferences, affecting marketing strategies adversely.
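
Timeliness and sampling validity can also be checked programmatically. The sketch below is illustrative only: it assumes each record carries an `observed_at` timestamp and that expected population shares per group are known in advance. The function names, the one-hour freshness limit, and the age brackets are hypothetical.

```python
from datetime import datetime, timedelta, timezone

def stale_records(rows, now, max_age):
    """Timeliness: rows whose `observed_at` timestamp is older than `max_age`."""
    return [r for r in rows if now - r["observed_at"] > max_age]

def representation_gap(sample_counts, population_shares):
    """Validity: sample share minus expected population share, per group."""
    total = sum(sample_counts.values())
    if total == 0:
        raise ValueError("sample is empty")
    return {g: sample_counts.get(g, 0) / total - share
            for g, share in population_shares.items()}

now = datetime(2024, 6, 1, tzinfo=timezone.utc)
quotes = [
    {"symbol": "AAA", "observed_at": now - timedelta(minutes=5)},
    {"symbol": "BBB", "observed_at": now - timedelta(days=3)},
]
stale = stale_records(quotes, now, timedelta(hours=1))
print([r["symbol"] for r in stale])  # ['BBB']

# Hypothetical survey: respondents per age bracket vs. known population shares.
gaps = representation_gap(
    {"18-34": 80, "35-54": 15, "55+": 5},
    {"18-34": 0.30, "35-54": 0.35, "55+": 0.35},
)
print({g: round(v, 2) for g, v in gaps.items()})
```

A large positive gap means a group is over-represented in the sample and a large negative gap means it is under-represented, which is the non-representative sampling problem described above. Relevance is harder to automate and usually depends on domain judgment.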

Case Studies: Consequences of Poor Data Quality

The implications of poor data quality are stark and often alarming. One notable example involved a major healthcare provider that integrated an AI system to assist in risk assessment for patients. Because it relied on historical health data riddled with inaccuracies, the AI tool misjudged which patients were at high risk for complications, ultimately leading to insufficient care for those who truly needed it. Such instances underscore how critical data quality is in enhancing patient outcomes and operational efficiency.

Similarly, in the realm of financial services, a reputable investment firm adopted an AI system to shape its trading strategies, only to discover that the system was using datasets marred by inaccuracies and bias. This not only hampered the firm's investment success but also resulted in reputational damage when clients realized that flawed AI had significantly affected their portfolios. Such experiences illuminate the essential need for robust mechanisms that ensure the quality of the data being harnessed.

The Path Toward Cultivating Data Quality

Recognizing the intrinsic benefits of high-quality data is only the first step. Organizations must actively cultivate a holistic approach to data governance that involves regular audits, comprehensive training, and the establishment of clear protocols for data collection and processing. By prioritizing data quality, businesses can navigate the complexities of AI, paving the way for models that deliver trustworthy insights and facilitate sound decision-making.

Moreover, the commitment to data quality not only enhances technical effectiveness but also contributes to building trust with stakeholders. As the public becomes increasingly aware of the capabilities and implications of AI, organizations must ensure that their systems are built on a foundation of reliable data. Thus, investing in data governance frameworks is not a mere compliance measure; it is an evolving corporate strategy that has far-reaching impacts for the future of artificial intelligence and its role in society.

| Advantage | Description |
|---|---|
| Improved Decision-Making | Reliable data ensures that AI systems make informed decisions based on accurate inputs. |
| Enhanced Model Performance | High-quality data leads to better training outcomes, enhancing the AI model’s capability to predict and analyze effectively. |
| Cost Reduction | Eliminating poor quality data minimizes the amount of time and resources spent on error correction and reprocessing. |
| Increased Compliance | Quality data helps in adhering to regulations by ensuring accuracy and completeness, reducing compliance risks. |

The relationship between data quality and AI processing efficiency cannot be overstated. Poor data quality can lead to erroneous outputs that might misguide decision-makers. Furthermore, good data practices foster an environment where the AI systems can operate at their best, allowing organizations to leverage their data strategically.

In light of these points, investing in data management processes emerges as a priority for organizations that utilize artificial intelligence. As the demand for AI continues to grow, establishing a strong foundation of data quality practices will be essential for organizations aiming to capitalize on the full potential of AI technologies.

The Implications of Data Quality on AI Performance

A critical examination of data quality reveals its profound influence on the performance of artificial intelligence systems. Poor data quality can lead to catastrophic outcomes, not just in terms of model accuracy, but also in operational efficiency and organizational credibility. Companies that neglect data quality may find themselves entangled in a web of unexpected challenges.

The Ripple Effect of Inaccurate Data

When organizations fail to prioritize accurate data, the repercussions extend far beyond immediate errors in AI predictions. For example, a leading autonomous vehicle company invested heavily in AI technologies to improve safety and navigation systems. Unfortunately, they utilized geolocation data that was outdated and incorrect, leading to numerous near-misses on the roads. This incident not only compromised public safety but also significantly delayed their market entry, demonstrating how the ripple effect of false assumptions based on poor-quality data can become a costly affair.

Furthermore, in the retail industry, a national chain seeking to enhance customer experience through personalized marketing faced substantial setbacks due to data inaccuracies. Despite employing advanced AI algorithms to analyze consumer behavior, the insights derived from flawed data led to misaligned marketing strategies that alienated loyal customers, causing significant revenue loss. Such cases show why data quality must be woven into the fabric of AI strategy to drive long-term success.

The Financial Cost of Data-Related Errors

The financial implications of compromised data quality can be staggering. A widely cited estimate attributed to IBM put the cost of poor data quality to the U.S. economy at about $3.1 trillion per year. That figure reflects not just lost opportunities but also the resources required to correct errors and manage the fallout. For AI, which depends heavily on data-driven insights, these costs can compound quickly, straining organizations that are unprepared to address data quality issues.

Building Robust Data Infrastructure

To navigate these complex challenges, organizations must invest in durable data infrastructure that not only focuses on acquisition but also emphasizes continuous monitoring and cleansing of data. Employing data integration tools can ensure that diverse datasets not only meet accuracy standards but are also harmonized for effective AI processing. For instance, companies like IBM and Microsoft have launched comprehensive data quality solutions, enabling businesses to implement preprocessing steps that clean and validate data before it feeds into AI algorithms, thereby enhancing the reliability of resultant analyses.
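
A preprocessing step of this kind can be pictured as a gate that harmonizes each record, validates it, and sets aside anything that fails before the data reaches a model. The following is a minimal sketch and does not represent any vendor's product; the function `clean_and_validate`, the field names, and the validation rules are assumptions made for illustration.

```python
def clean_and_validate(rows, required, validators):
    """Split rows into (accepted, rejected); rejected rows carry their reasons."""
    accepted, rejected, seen = [], [], set()
    for row in rows:
        # Harmonize: strip whitespace and lowercase every string value.
        row = {k: v.strip().lower() if isinstance(v, str) else v
               for k, v in row.items()}
        # Completeness: every required field must be present and non-empty.
        reasons = [f"missing {f}" for f in required if row.get(f) in (None, "")]
        # Validity: apply a per-field rule to fields that are present.
        reasons += [f"invalid {f}" for f, ok in validators.items()
                    if row.get(f) not in (None, "") and not ok(row[f])]
        # Deduplicate on the required fields after harmonization.
        key = tuple(row.get(f) for f in required)
        if not reasons and key in seen:
            reasons.append("duplicate")
        if reasons:
            rejected.append((row, reasons))
        else:
            seen.add(key)
            accepted.append(row)
    return accepted, rejected

# Hypothetical raw customer records from two differently formatted sources.
raw = [
    {"email": " Ana@Example.com ", "age": 29},
    {"email": "ana@example.com", "age": 29},
    {"email": "", "age": 40},
    {"email": "bo@example.com", "age": 430},
]
accepted, rejected = clean_and_validate(
    raw,
    required=["email", "age"],
    validators={"age": lambda a: 0 <= a <= 120, "email": lambda e: "@" in e},
)
print(len(accepted))                         # 1
print([reasons for _, reasons in rejected])
```

Keeping the rejected rows together with their reasons, rather than silently dropping them, gives auditors a record of what was excluded and why.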

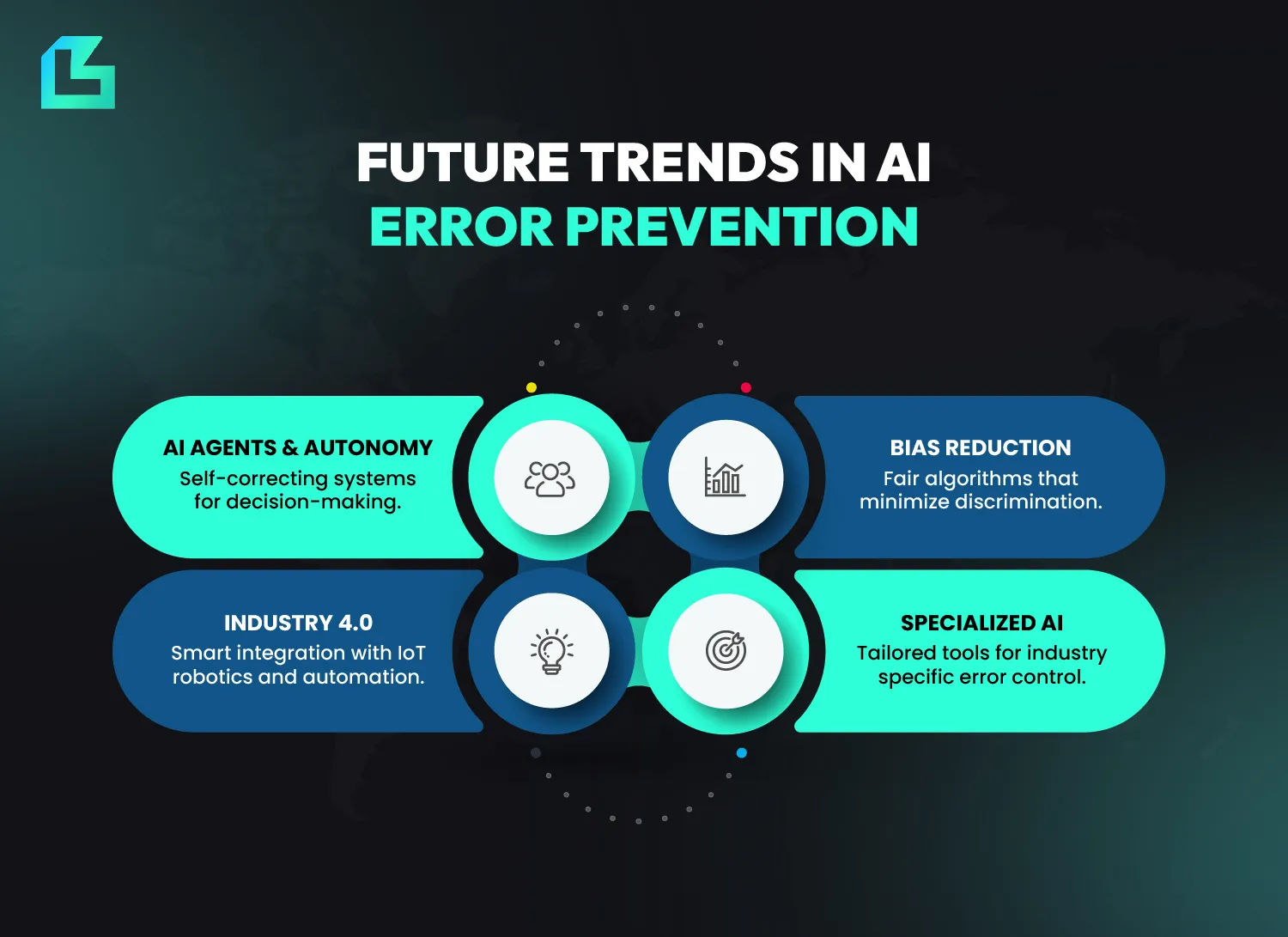

Additionally, machine learning itself can play a role in ensuring data quality. Advanced AI algorithms can continuously learn from new data and refine the systems in place, identifying anomalies and outliers that may compromise the integrity of the dataset. Such adaptive approaches not only foster a culture of quality management but also increase overall trust in AI solutions by providing an ongoing audit trail of data validity.
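
As a simple stand-in for the learned anomaly detectors described here, the sketch below flags outliers with a fixed statistical rule, the modified z-score based on the median absolute deviation (MAD). It is not a machine learning model; the threshold of 3.5 is a common convention, and the sensor readings are made up for illustration.

```python
from statistics import median

def mad_outliers(values, threshold=3.5):
    """Indices of values whose modified z-score exceeds `threshold`."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    # 0.6745 scales the MAD so the score is comparable to a standard z-score.
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical temperature readings with one implausible spike.
readings = [20.1, 19.8, 20.4, 20.0, 19.9, 57.3, 20.2]
print(mad_outliers(readings))  # [5]
```

The median is used instead of the mean because a single extreme value barely moves it, so the outlier cannot mask itself by distorting the baseline.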

The Ethical Dimension of Data Quality

As awareness of AI’s societal impact grows, the ethical implications of data quality cannot be overlooked. Decisions made by AI systems trained on biased or incomplete data can perpetuate discrimination and inequality. Multiple studies have found that facial recognition systems trained on datasets lacking diversity misidentify members of minority groups at higher rates. This unsettling reality highlights the pressing need for data quality initiatives that ensure fairness and inclusivity in AI learning processes.

Therefore, adopting comprehensive data governance strategies is not merely a technical necessity; it is a moral imperative. Companies must strive to create datasets that reflect the diversity of real-world scenarios to not only achieve compliance but also foster an AI landscape that promotes equity and ethical interactions in society.
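
One way to make disparities of this kind visible is to break evaluation results down by group rather than reporting a single overall error rate. The sketch below is a hypothetical illustration: the group labels, identifiers, and the `error_rate_by_group` helper are assumptions, and a real fairness audit would involve far more than this one metric.

```python
from collections import Counter

def error_rate_by_group(examples):
    """Map each group to its share of misclassified examples."""
    totals, errors = Counter(), Counter()
    for group, predicted, actual in examples:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}

# Hypothetical evaluation results: (group, predicted_id, actual_id).
results = [
    ("group_a", "p1", "p1"), ("group_a", "p2", "p2"),
    ("group_a", "p3", "p3"), ("group_a", "p4", "p9"),
    ("group_b", "p5", "p6"), ("group_b", "p7", "p7"),
]
print(error_rate_by_group(results))  # {'group_a': 0.25, 'group_b': 0.5}
```

In this made-up example the overall error rate is one in three, which hides the fact that one group is misidentified twice as often as the other.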

Conclusion: The Imperative of Prioritizing Data Quality in AI

In the ever-evolving landscape of artificial intelligence, data quality emerges as a cornerstone of successful and efficient processing. As we’ve explored, the ramifications of neglecting this critical aspect can be devastating, impacting everything from individual safety to corporate reputations. High-quality data enables AI systems to function optimally, driving accurate predictions and actions while fostering operational efficiency.

Moreover, organizations must recognize that the financial burden of poor data quality is substantial, with estimated losses to U.S. businesses exceeding $3 trillion annually. This reality demands immediate and proactive measures. By investing in robust data infrastructure, employing sophisticated data integration tools, and adopting ongoing quality monitoring protocols, businesses can not only mitigate risks but also unlock the full potential of AI technologies.

Further, it is critical for the ethical implications of data quality to be at the forefront of AI strategies. As AI systems increasingly influence societal norms and practices, it is essential that they are trained on comprehensive and diverse datasets. This commitment is not only a path to compliance but also one to a just and inclusive technological future.

As we advance into an age where artificial intelligence plays a pivotal role in decision-making, an unwavering focus on data quality will be paramount. Organizations that embed this principle into their core strategies will not only enhance their operational capacities but will also be better positioned to foster trust and equity in AI-driven solutions. Ultimately, the journey toward effective and responsible AI begins with a steadfast commitment to quality data.