The Role of Data Processing in Machine Learning Models: Challenges and Solutions

Understanding the Significance of Data Processing

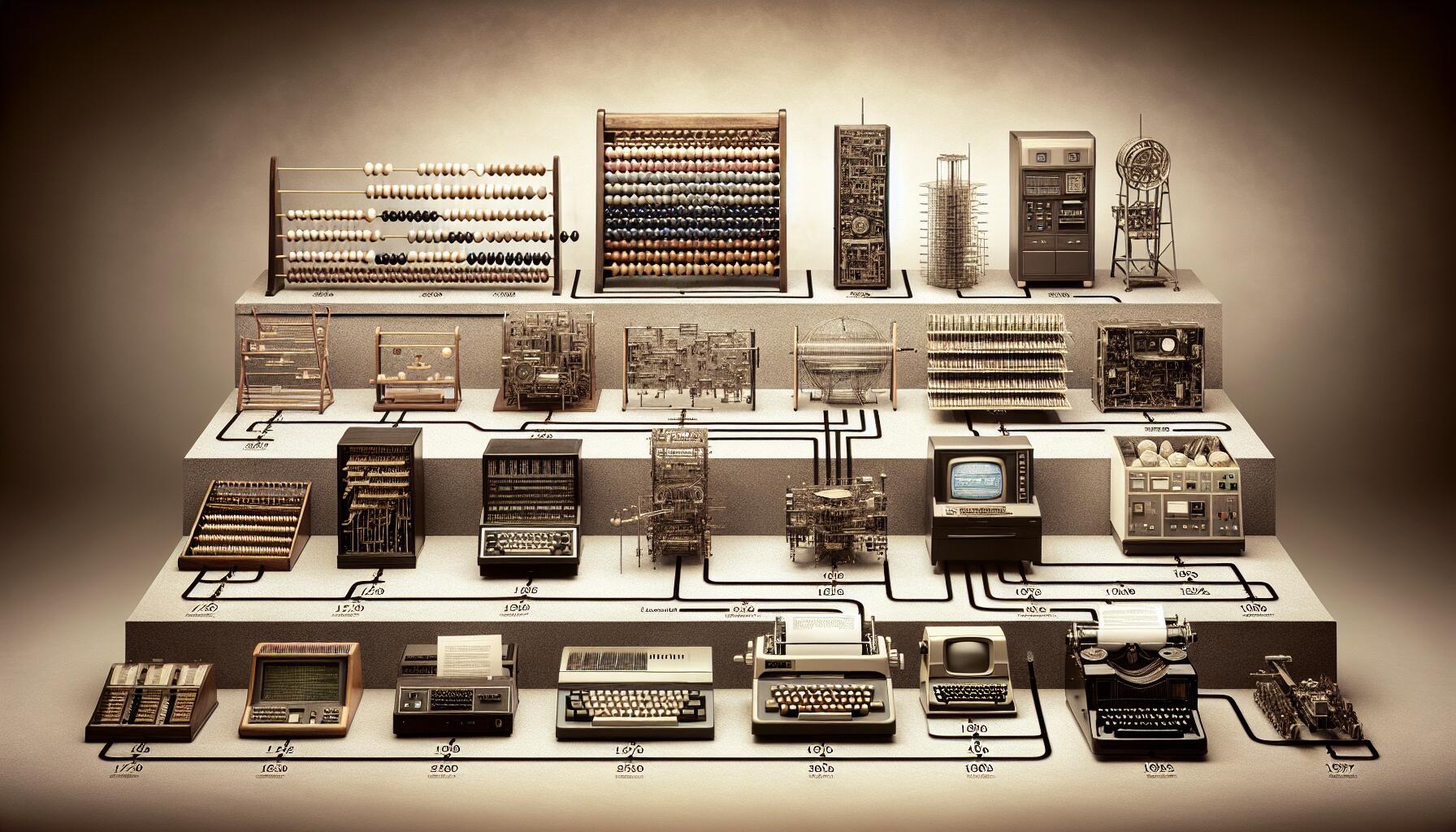

In the rapidly evolving landscape of technology, the role of machine learning models cannot be overstated. These models are shaping how decisions are made in sectors such as healthcare, finance, and retail. However, their effectiveness hinges on one crucial factor: data processing, the foundation for transforming raw data into actionable insights. While advanced algorithms hold great promise, without appropriate data management their potential can remain unrealized.

The importance of efficient data processing can be broken down into several key elements. First and foremost is data quality. In healthcare, for instance, a predictive model trained on incomplete patient records or erroneous lab results could yield skewed predictions that misinform treatment plans, potentially endangering lives. To avoid such scenarios, organizations are increasingly focusing on data cleansing techniques that help ensure accuracy and completeness.

Secondly, data volume presents a formidable challenge. In the United States alone, businesses generate massive amounts of data daily, from customer interactions to operational metrics, and many companies now face the daunting task of processing petabytes of information. Apache Spark, for example, distributes data processing across multiple nodes in a cluster, allowing for quicker analysis and, through its streaming APIs, near-real-time insights.

Another critical factor is data variety. Today’s datasets are often unstructured or come in varied formats such as text, images, and videos, and each type may require its own processing techniques before it can be integrated effectively. For example, analyzing social media sentiment requires natural language processing to interpret textual data, while image recognition relies on entirely different methods. The ability to harmonize diverse data sources is therefore a key driver of effective machine learning.

Organizations looking to leverage big data also face notable challenges. One major concern is handling missing values; imputation, or algorithms that can operate directly on incomplete datasets, can help mitigate their impact. Data privacy concerns are equally pressing, especially with regulations like the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) in place. To navigate this landscape, companies must adopt robust data governance frameworks to safeguard sensitive information.
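One common building block for protecting sensitive identifiers before data reaches a model is pseudonymization. The Python sketch below, which uses only the standard library, replaces an email address with a keyed HMAC-SHA256 token so that records can still be linked without exposing the raw value. The key, field names, and records are hypothetical. Note that under GDPR pseudonymized data is generally still treated as personal data, so this reduces risk rather than removing compliance obligations.

```python
import hashlib
import hmac

# Hypothetical secret key; in practice it would be kept in a secrets manager,
# stored separately from the pseudonymized data.
SECRET_KEY = b"replace-with-a-securely-stored-key"


def pseudonymize(identifier: str, key: bytes = SECRET_KEY) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256).

    The same input always maps to the same token, so records can still be
    joined. Without the key, an attacker cannot recompute tokens to test
    guessed identifiers.
    """
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical customer records containing a direct identifier (email).
records = [
    {"email": "alice@example.com", "purchase_total": 120.5},
    {"email": "bob@example.com", "purchase_total": 80.0},
]

# Swap the email field for a pseudonymous token before training.
safe_records = [
    {"customer_token": pseudonymize(r["email"]), "purchase_total": r["purchase_total"]}
    for r in records
]
```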

Finally, integrating diverse data sources is yet another hurdle. Whether combining data from customer relationship management (CRM) systems with external market research or with IoT sensor feeds, organizations need streamlined approaches to merge this often disparate data efficiently. Data lakes have become a popular solution, allowing organizations to store vast amounts of structured and unstructured data in raw form and make it accessible for analysis when needed.
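Merging disparate sources usually starts with reconciling their join keys. The following is a minimal sketch, assuming two hypothetical sources (a CRM export and a market-research feed) whose customer IDs differ only in case and surrounding whitespace; it normalizes the keys and performs a left hash join so that CRM records without a match are kept.

```python
from collections import defaultdict

# Hypothetical CRM export and market-research feed. The key fields are named
# and formatted differently, as is common across independent systems.
crm_rows = [
    {"CustomerID": " C001 ", "name": "Acme Corp", "region": "west"},
    {"CustomerID": "c002", "name": "Globex", "region": "EAST"},
]
market_rows = [
    {"customer_id": "C001", "industry_growth": 0.04},
    {"customer_id": "C003", "industry_growth": 0.02},
]


def normalize_key(raw: str) -> str:
    # Trim whitespace and upper-case so " c002 " and "C002" match.
    return raw.strip().upper()


def left_join(left, right, left_key, right_key):
    # Index the right-hand source by normalized key (a simple hash join).
    index = defaultdict(list)
    for row in right:
        index[normalize_key(row[right_key])].append(row)

    merged = []
    for row in left:
        key = normalize_key(row[left_key])
        matches = index.get(key) or [{}]  # an empty match keeps unmatched rows
        for match in matches:
            combined = {k: v for k, v in row.items() if k != left_key}
            combined["customer_id"] = key
            combined.update({k: v for k, v in match.items() if k != right_key})
            merged.append(combined)
    return merged


customers = left_join(crm_rows, market_rows, "CustomerID", "customer_id")
```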

This article examines the interplay between data processing and machine learning models. By addressing the challenges outlined above and adopting innovative solutions, organizations can significantly improve model performance. With a firm grasp of these dynamics, they are better positioned to harness the power of their data and unlock new avenues for growth. As industries continue to innovate, understanding data processing will remain vital for success.


The Challenges of Data Quality in Machine Learning

One of the most pressing issues in data processing for machine learning models is ensuring data quality. Poor data quality can lead to disastrous outcomes, turning what should be an effective solution into a failure. This is particularly evident in sectors like banking, where incorrect data can cause fraud detection systems to falsely flag legitimate transactions or, conversely, fail to identify fraudulent activity. A study by the IBM Data Quality team estimates that approximately 30% of organizational data is inaccurate. Such inaccuracies can be detrimental, affecting both operational efficiency and customer satisfaction.

To combat these data quality issues, organizations are increasingly employing a range of data cleansing techniques. These methods typically encompass the following:

- Validation: Implementing checks to ensure data meets predefined criteria.

- Deduplication: Removing duplicate entries to prevent inflation of datasets.

- Normalization: Standardizing data to enable more effective comparisons and analyses.

- Enrichment: Augmenting existing datasets with additional relevant information.
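The four techniques above can be combined into a single pass over the data. This is a minimal, standard-library-only sketch; the records, the validation rules, and the `REGION_BY_COUNTRY` lookup table are hypothetical stand-ins for an organization's own data and business rules.

```python
# Hypothetical raw records; field names and rules are illustrative only.
raw = [
    {"id": 1, "country": "us", "age": 34, "income": 52000},
    {"id": 1, "country": "us", "age": 34, "income": 52000},  # duplicate
    {"id": 2, "country": "DE", "age": -5, "income": 61000},  # invalid age
    {"id": 3, "country": "de", "age": 41, "income": 75000},
]

# Hypothetical reference table used for enrichment.
REGION_BY_COUNTRY = {"US": "North America", "DE": "Europe"}


def is_valid(rec):
    # Validation: enforce predefined criteria before a record is used.
    return 0 <= rec["age"] <= 120 and rec["income"] >= 0


def cleanse(records):
    seen = set()
    cleaned = []
    for rec in records:
        if not is_valid(rec):
            continue
        # Deduplication: keep only the first record for each id.
        if rec["id"] in seen:
            continue
        seen.add(rec["id"])
        # Normalization: standardize country codes to upper case.
        country = rec["country"].strip().upper()
        cleaned.append({
            **rec,
            "country": country,
            # Enrichment: add a derived attribute from a reference table.
            "region": REGION_BY_COUNTRY.get(country, "Unknown"),
        })
    if not cleaned:
        return cleaned
    # Normalization: min-max scale income to [0, 1] for comparability.
    incomes = [r["income"] for r in cleaned]
    lo, hi = min(incomes), max(incomes)
    for r in cleaned:
        r["income_scaled"] = (r["income"] - lo) / (hi - lo) if hi > lo else 0.0
    return cleaned


clean = cleanse(raw)
```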

Another significant challenge is managing data volume. As businesses accumulate data at an unprecedented rate, the sheer size of these datasets calls for new processing methods. Cloud-based solutions have transformed this aspect of data processing: platforms such as Amazon Web Services (AWS) and Google Cloud offer scalable storage and computational power, enabling organizations to handle large volumes of data efficiently. Organizations can also use distributed computing frameworks like Apache Hadoop, which split massive datasets into manageable blocks and process them in parallel across a cluster, allowing for quicker analysis.
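The divide-and-combine idea behind Hadoop's MapReduce model can be illustrated on a single machine. In the sketch below, the input is split into chunks, each chunk is counted independently (the map phase), and the partial counts are merged (the reduce phase). This is not Hadoop's API: in a real cluster the map tasks would run in parallel on the nodes holding each block of data, whereas here they run sequentially. The log lines are made up.

```python
from collections import Counter
from functools import reduce
from itertools import islice


def chunked(iterable, size):
    # Split the input into fixed-size chunks, analogous to input splits.
    it = iter(iterable)
    while chunk := list(islice(it, size)):
        yield chunk


def map_chunk(lines):
    # Map phase: count words in one chunk, independently of all others.
    counts = Counter()
    for line in lines:
        counts.update(line.lower().split())
    return counts


def reduce_counts(a, b):
    # Reduce phase: merge two partial results into one.
    return a + b


# Hypothetical log lines standing in for a much larger dataset.
log_lines = [
    "error disk full",
    "warning disk slow",
    "error network down",
    "info job done",
]

partials = map(map_chunk, chunked(log_lines, size=2))
totals = reduce(reduce_counts, partials, Counter())
```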

Data variety also complicates the data processing landscape. Today, organizations encounter a wealth of unstructured data formats, including images, videos, and streaming information from various digital touchpoints. For instance, in the context of e-commerce, businesses analyze vast amounts of user-generated content from reviews and social media. Employing techniques such as machine learning-based text analytics and computer vision allows companies to derive insights from these disparate sources. The integration of these methodologies not only enhances model performance but also helps in creating a more holistic view of customer behavior.
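Before text such as customer reviews can feed a model, it usually has to be converted into numbers. A minimal sketch of one classic approach, bag-of-words featurization, is shown below. The reviews are hypothetical, and production text analytics would typically add stop-word handling, TF-IDF weighting, or learned embeddings.

```python
import re
from collections import Counter

# Hypothetical user-generated reviews.
reviews = [
    "Great product, fast shipping!",
    "Terrible product. Slow shipping and poor support.",
    "Great support, great price.",
]


def tokenize(text):
    # Lower-case the text and keep alphabetic tokens only.
    return re.findall(r"[a-z]+", text.lower())


# Shared vocabulary across all reviews, sorted for a stable column order.
vocab = sorted({tok for review in reviews for tok in tokenize(review)})


def to_vector(text):
    # Bag-of-words: count how often each vocabulary term appears.
    counts = Counter(tokenize(text))
    return [counts[term] for term in vocab]


vectors = [to_vector(r) for r in reviews]
```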

Moreover, addressing missing values within datasets remains a critical concern. Omitting incomplete records can lead to biased models, while simply filling gaps with averages can distort the actual distribution of the data. Alternative strategies, such as algorithms that can accommodate missing data or models that estimate the missing entries, are gaining traction in many organizations. These include K-Nearest Neighbors (KNN) imputation, which fills a gap using the values of the most similar records, and Random Forest-based imputation, which predicts missing entries from the features that are available.
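To make the KNN approach concrete, the sketch below fills each missing value with the mean of that feature across the `k` most similar rows, measuring similarity with Euclidean distance over the features both rows share. The data is hypothetical, and a real pipeline would normally scale features first so that large-valued columns such as income do not dominate the distance.

```python
import math

# Hypothetical rows of [age, income, tenure_years]; None marks a missing value.
rows = [
    [25.0, 50000.0, 1.0],
    [27.0, 52000.0, 1.0],
    [45.0, 90000.0, 3.0],
    [47.0, 95000.0, 3.0],
    [26.0, None, 1.0],
]


def distance(a, b):
    # Euclidean distance over the features that both rows actually have.
    shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    if not shared:
        return math.inf
    return math.sqrt(sum((x - y) ** 2 for x, y in shared))


def knn_impute(data, k=2):
    filled = [row[:] for row in data]  # copy so the input is left untouched
    for i, row in enumerate(data):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate donors: other rows where feature j is observed.
            donors = [other for n, other in enumerate(data)
                      if n != i and other[j] is not None]
            donors.sort(key=lambda other: distance(row, other))
            nearest = donors[:k]
            if nearest:
                filled[i][j] = sum(d[j] for d in nearest) / len(nearest)
    return filled


imputed = knn_impute(rows, k=2)
```

Here the missing income for the 26-year-old is imputed as 51,000 from the two most similar rows, whereas the column mean (71,750) would be pulled upward by the two older, higher-earning records, which illustrates the averaging problem described above.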

As industries embrace digital transformation, the emphasis on robust data processing practices cannot be overstated. Ensuring data quality, managing volume and variety, and tackling missing values are just the starting points. By refining these elements of data processing, organizations can significantly enhance the accuracy and reliability of their machine learning models, ultimately driving better decision-making and fostering greater innovation.

The Challenges in Data Processing for Machine Learning

Data processing is a fundamental step in developing effective machine learning models. One primary challenge is data quality. Inaccurate, incomplete, or biased data can lead models to misinterpret input and produce unreliable outputs. Moreover, training datasets must adequately represent real-world conditions; otherwise, models may not generalize well to new, unseen data.

Another obstacle is data volume. With ever-increasing amounts of data being generated, managing and processing it efficiently is crucial. Big data can overwhelm systems and slow down processing, which complicates real-time analytics. Techniques like sampling and feature extraction are used to mitigate this issue, yet they carry their own risks: a sample may miss rare but important events, and aggressive feature extraction can discard useful signal.

Additionally, data privacy poses challenges. Compliance with regulations like GDPR means that organizations must process data responsibly, ensuring that sensitive information is either anonymized or removed entirely. Balancing effective data usage with user privacy requires advanced strategies and technologies.

Data processing algorithms and tools continue to evolve to confront these challenges head-on. Distributed computing frameworks such as Apache Spark make it possible to handle larger datasets more efficiently, while techniques like data augmentation can improve model robustness and help offset gaps or imbalances in the training data. Keeping abreast of these advancements is critical for organizations aiming to excel in machine learning applications.

As the field progresses, ongoing research aims to address these challenges, making data processing not just a hurdle but a stepping stone toward more accurate and reliable machine learning models.
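Sampling, one of the volume-reduction techniques mentioned in this section, can be done in a single pass over data too large to hold in memory. The sketch below implements reservoir sampling (Algorithm R), which keeps a uniform random sample of `k` items from a stream of unknown length; the simulated stream of event IDs is an assumption for illustration.

```python
import random


def reservoir_sample(stream, k, seed=None):
    """Uniformly sample k items from a stream of unknown length (Algorithm R).

    Only k items are held in memory at any time.
    """
    rng = random.Random(seed)
    reservoir = []
    for i, item in enumerate(stream):
        if i < k:
            # Fill the reservoir with the first k items.
            reservoir.append(item)
        else:
            # Keep item i with probability k / (i + 1), replacing a random slot.
            j = rng.randint(0, i)
            if j < k:
                reservoir[j] = item
    return reservoir


# Sample 5 events from a simulated stream of 100,000 event IDs.
sample = reservoir_sample(range(100_000), k=5, seed=42)
```

Because every item ends up in the sample with equal probability, the result is unbiased, but a small sample can still miss rare events, which is one of the risks noted above.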


Enhancing Data Processing Techniques: Innovations and Best Practices

As organizations strive to leverage machine learning models effectively, their focus is increasingly shifting towards enhancing data processing techniques. With advancements in technology, several innovative solutions have emerged to tackle the data-related challenges previously discussed. These enhancements are crucial for improving model performance and ensuring reliable outcomes.

One notable innovation in data processing is automated data profiling, which uses algorithms to assess the structure, completeness, and consistency of data across diverse sources. Tools with these capabilities can analyze datasets rapidly, surfacing potential risks and quality issues before machine learning models are trained. Vendors such as Talend and Informatica offer automated profiling tools that help organizations streamline their data processing efforts.
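The core of automated profiling can be sketched in a few lines. The example below computes, for each column, its completeness (share of non-missing values), its number of distinct values, and the set of value types observed, then flags columns that look problematic. It is a hypothetical illustration and does not reflect how Talend or Informatica implement profiling; the customer records are made up.

```python
def observed_types(values):
    # Names of the Python types seen among non-missing values.
    return sorted({type(v).__name__ for v in values if v is not None})


def profile(records):
    """Summarize completeness, cardinality, and type consistency per column."""
    columns = sorted({key for rec in records for key in rec})
    report = {}
    for col in columns:
        values = [rec.get(col) for rec in records]  # absent field -> None
        present = [v for v in values if v is not None]
        report[col] = {
            "completeness": len(present) / len(values) if values else 0.0,
            "distinct": len(set(present)),
            "types": observed_types(values),
        }
    return report


# Hypothetical records with typical quality problems.
customers = [
    {"id": 1, "email": "a@example.com", "age": 34},
    {"id": 2, "email": None, "age": "41"},   # missing email, age stored as text
    {"id": 3, "email": "c@example.com"},     # age field absent entirely
    {"id": 4, "email": "c@example.com", "age": 29},
]

report = profile(customers)

# Flag columns that are incomplete or mix value types.
issues = [col for col, stats in report.items()
          if stats["completeness"] < 1.0 or len(stats["types"]) > 1]
```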

In addition to automated data profiling, the incorporation of data lakes is revolutionizing the way businesses manage vast datasets. Data lakes enable the storage of structured and unstructured data in its raw form, serving as a centralized repository from which organizations can transform the data as needed. This flexibility allows data scientists to experiment with different types of analysis, ultimately leading to richer insights and more accurate models. Companies like Netflix have successfully harnessed data lakes to analyze user behavior and optimize content recommendations.
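Storing data in raw form means its structure is imposed when the data is read, an approach often called schema-on-read. The sketch below assumes hypothetical viewing events landed in a lake as JSON lines by producers that disagree on field names and types; the reader maps them onto one schema at read time and skips malformed lines. It does not represent Netflix's actual pipeline.

```python
import json

# Hypothetical raw events stored as-is (JSON lines). Nothing was rejected at
# write time, so field names and types vary between producers.
RAW_EVENTS = """\
{"user": "u1", "title": "Show A", "watched_minutes": "42"}
{"user_id": "u2", "title": "Show B", "watched_minutes": 15}
{"user": "u1", "title": "Show B"}
not valid json
"""


def read_viewing_events(raw_text):
    """Apply a schema only when the data is read (schema-on-read)."""
    for line in raw_text.splitlines():
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed lines instead of failing the whole read
        yield {
            # Accept either field name for the user identifier.
            "user_id": event.get("user_id") or event.get("user"),
            "title": event.get("title"),
            # Coerce minutes to float; a missing value becomes 0.0.
            "minutes": float(event.get("watched_minutes", 0) or 0),
        }


events = list(read_viewing_events(RAW_EVENTS))
```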

The Role of Data Annotation in Supervised Learning

As the demand for supervised learning increases, so does the importance of data annotation. Properly labeled data is critical for training accurate machine learning models, especially in fields such as healthcare and the automotive industry, where precision is paramount. Platforms such as Amazon SageMaker Ground Truth and Labelbox offer tools that support scalable data annotation. By combining human annotators with machine learning techniques, these platforms help maintain label quality and, in turn, model performance.
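One simple way to combine labels from several human annotators is majority voting with an agreement threshold. The sketch below is hypothetical and is not the consolidation method used by any particular platform; items whose annotators disagree too much are routed back for review rather than accepted.

```python
from collections import Counter

# Hypothetical labels from three annotators for four images.
annotations = {
    "img_001": ["pedestrian", "pedestrian", "cyclist"],
    "img_002": ["car", "car", "car"],
    "img_003": ["cyclist", "pedestrian", "car"],
    "img_004": ["car", "truck", "car"],
}


def consolidate(labels_by_item, min_agreement=2 / 3):
    """Majority-vote consolidation; low-agreement items go back for review."""
    accepted, needs_review = {}, []
    for item, labels in labels_by_item.items():
        # Most frequent label and how many annotators chose it.
        label, votes = Counter(labels).most_common(1)[0]
        if votes / len(labels) >= min_agreement:
            accepted[item] = label
        else:
            needs_review.append(item)
    return accepted, needs_review


accepted, needs_review = consolidate(annotations)
```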

Despite significant strides in data processing, organizations still face challenges with real-time processing. In fast-moving sectors like finance or online retail, making timely decisions based on the latest data can be crucial. Streaming technologies such as Apache Kafka, a distributed event streaming platform, and Apache Flink, a stream processing framework, enable organizations to analyze data continuously as it flows into their systems. This capability yields actionable insights that can inform immediate business decisions, fostering a competitive edge.
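Stream processors such as Flink often aggregate events over time windows. The sketch below shows the idea with a tumbling (fixed, non-overlapping) 60-second window over hypothetical transaction events. It assumes events arrive in timestamp order; real frameworks handle late and out-of-order events with mechanisms such as watermarks, which this sketch omits. It does not use the Kafka or Flink APIs.

```python
from collections import defaultdict

# Hypothetical (timestamp_seconds, account, amount) events, in time order.
events = [
    (0, "ACME", 100.0),
    (12, "ACME", 50.0),
    (61, "ACME", 200.0),
    (75, "GLOBX", 30.0),
    (130, "ACME", 10.0),
]


def tumbling_window_totals(stream, window_seconds=60):
    """Yield (window_start, totals_per_key) for each fixed time window.

    A window's result is emitted as soon as an event from a later window
    arrives, so only the current window's totals are kept in memory.
    Assumes events arrive in timestamp order.
    """
    current_window, totals = None, defaultdict(float)
    for ts, key, amount in stream:
        window = ts // window_seconds
        if current_window is not None and window != current_window:
            yield current_window * window_seconds, dict(totals)
            totals.clear()
        current_window = window
        totals[key] += amount
    if current_window is not None:
        # Flush the final, still-open window.
        yield current_window * window_seconds, dict(totals)


results = list(tumbling_window_totals(events))
```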

Ethical Considerations and Data Privacy

However, with these advancements come critical ethical considerations and the need for robust data privacy practices. As organizations collect and process more sensitive information, compliance with regulations such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) has become non-negotiable. Companies are increasingly incorporating privacy-preserving data processing techniques, such as federated learning and differential privacy, to ensure responsible use of data while still achieving the model accuracy that businesses require.
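Differential privacy can be illustrated with its most basic tool, the Laplace mechanism. The sketch below releases a count with noise drawn from a Laplace distribution whose scale is `1 / epsilon`, which gives epsilon-differential privacy because a counting query changes by at most 1 when one person's record is added or removed. The ages and the `epsilon` value are hypothetical, and Python's `random` module is not suitable for production use; real deployments rely on vetted differential-privacy libraries.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponential variables with mean `scale`
    # is Laplace-distributed with location 0 and that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon, rng=None):
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    A counting query has sensitivity 1, so the noise scale is 1 / epsilon.
    Illustrative only: not hardened against floating-point attacks.
    """
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)


# Hypothetical ages; the true count of people aged 50+ is 3.
ages = [23, 35, 47, 52, 61, 29, 44, 70]
noisy = private_count(ages, lambda a: a >= 50, epsilon=1.0, rng=random.Random(0))
```

Smaller values of `epsilon` add more noise and give stronger privacy; larger values give more accurate counts with weaker guarantees.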

Furthermore, organizations are now investing in data governance frameworks that outline how data is collected, managed, and used. These frameworks emphasize accountability, transparency, and ethical use of data, ensuring that machine learning models are not only effective but also ethically sound and reliable.

Overall, the ongoing evolution of data processing techniques presents both significant opportunities and challenges for machine learning implementations across sectors. As organizations continue to refine their approaches to data quality, volume, variety, and processing methods, the potential for innovation and better decision-making grows accordingly.


Conclusion: Navigating the Complexity of Data Processing in Machine Learning

In summary, the role of data processing is undeniably crucial in the development and execution of machine learning models. As organizations grapple with an ever-increasing influx of data, they encounter various challenges, from ensuring data quality to navigating ethical concerns. However, the innovations seen in data processing—from automated profiling and data lakes to advanced data annotation techniques—offer promising solutions to these obstacles.

Furthermore, real-time data processing gives organizations the agility to harness data effectively and make informed decisions quickly, which is essential in competitive sectors like finance and technology. The adoption of privacy-preserving techniques reflects a growing awareness of the importance of ethical data collection and usage, supporting compliance with regulations like GDPR and CCPA while maintaining the integrity of machine learning models.

As organizations continue to explore new methodologies and frameworks in data governance, they not only enhance the performance of their machine learning initiatives but also build trust with their users. The goal is a landscape where data processing is seamless, effective, and aligned with ethical standards, paving the way for more robust machine learning solutions.

Ultimately, embracing these advancements while remaining vigilant about potential pitfalls will be key for businesses aiming to thrive in a data-driven world. The integration of enhanced data processing techniques will empower companies to extract deeper insights, drive innovation, and realize the true potential of machine learning.