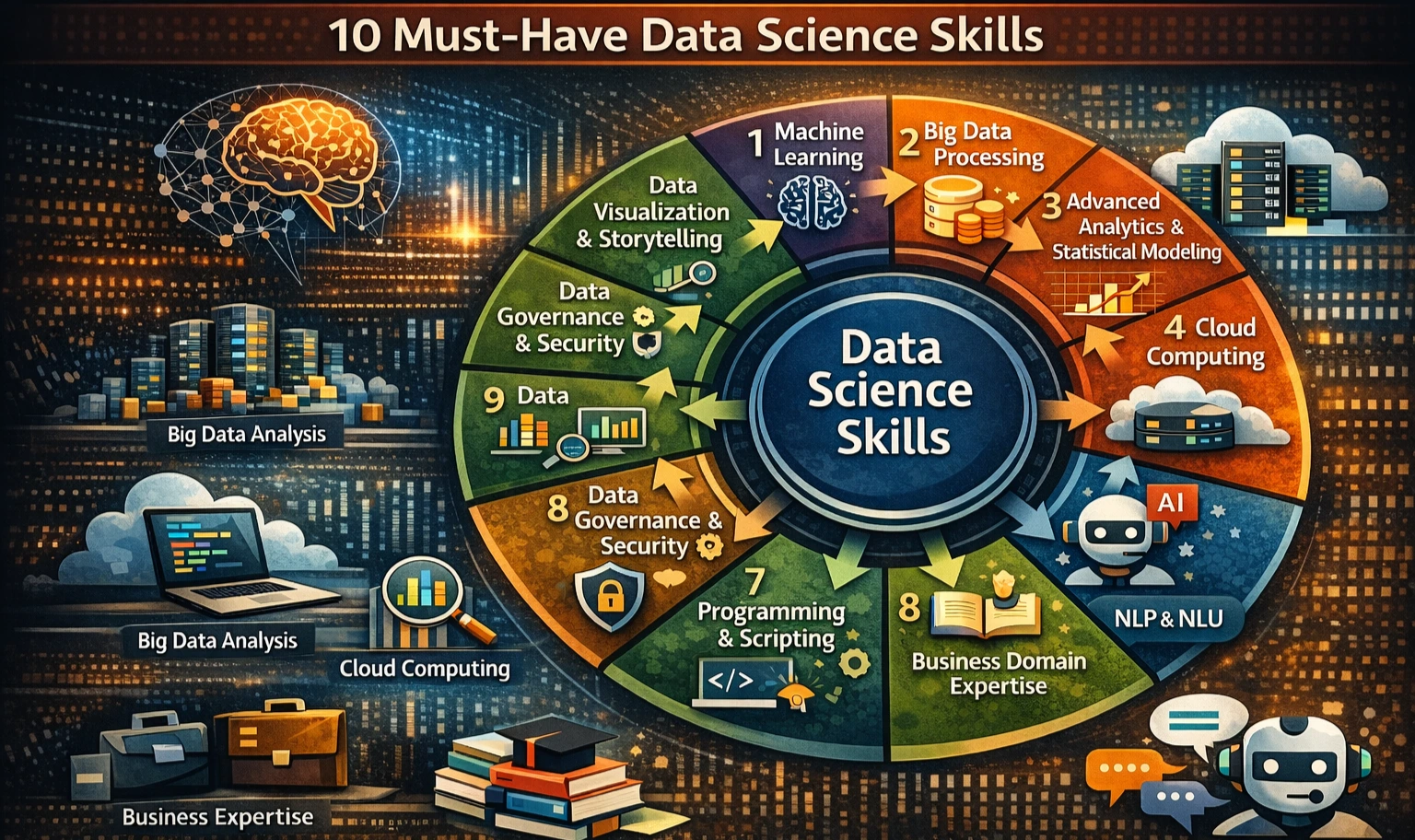

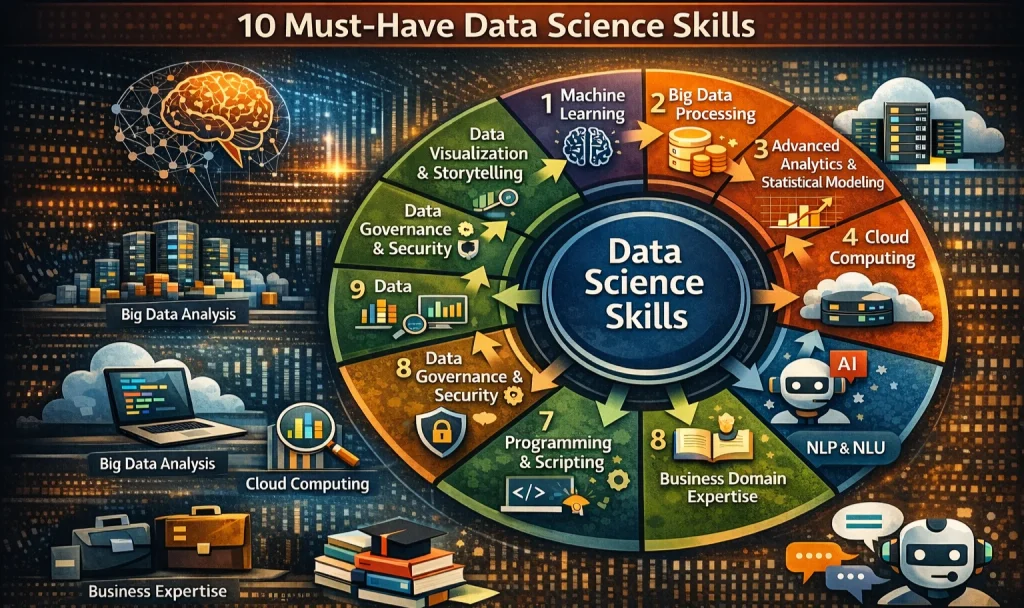

The Digital Frontier of Data Processing

Organizations today face the task of sifting through an overwhelming volume of data. Often called the “new oil,” this data can point the way to innovation, better customer experiences, and a competitive edge. Its scale and complexity, however, demand advanced data processing techniques from any business that wants to navigate this digital frontier effectively.

Why Advanced Data Processing Matters

The significance of investing in advanced data processing methods cannot be overstated. As the volume and variety of data continue to escalate, traditional methods often fall short. Here are three compelling reasons organizations should prioritize these cutting-edge techniques:

- Efficiency: Advanced processing techniques streamline data workflows, cutting the time and computational power needed to derive insights. For instance, a model trained on cleaned and reduced data can finish in a fraction of the time it would need on the raw dataset, allowing teams to pivot strategies quickly.

- Scalability: With the growth of data—from customer interactions to Internet of Things (IoT) devices—scalable systems can effortlessly adapt to increased volumes. Businesses can implement cloud computing solutions that expand as needs grow, ensuring that data infrastructure keeps pace with organizational demand.

- Improved Accuracy: The precision of predictive models hinges on the quality of input data. By employing sophisticated data handling methodologies, organizations can mitigate biases and inaccuracies, resulting in models that reflect real-world nuances and deliver reliable forecasts. This is critical in industries such as healthcare, where accurate predictions can lead to better patient outcomes.

Examples of Techniques

Numerous innovative methods are enhancing data processing, each empowering organizations to glean insights more effectively:

- Data Normalization: This standardization process puts datasets on a consistent scale and format, removing the confusion that arises from differing units or formats. For example, normalizing sales data from multiple regions lets a company compare performance across its market segments on equal terms.

- Feature Engineering: This technique involves identifying and creating relevant variables that can impact the performance of machine learning models. By thoughtfully choosing features, businesses can substantially enhance the explanatory power of their models; for instance, using customer demographics alongside purchasing behaviors to refine marketing efforts.

- Dimensionality Reduction: As datasets gain more variables, analysis becomes cumbersome. Techniques like Principal Component Analysis (PCA) condense a dataset into fewer variables while retaining most of its variance. This speeds up processing and makes underlying trends easier to see.
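
The normalization step described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a production pipeline: the function names `min_max_scale` and `z_score` and the `region_sales` figures are hypothetical, chosen only to show two common ways of putting values on a shared scale.

```python
from statistics import mean, pstdev


def min_max_scale(values):
    """Rescale values linearly to the [0, 1] range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # All values are identical, so there is no spread to rescale.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def z_score(values):
    """Center values on 0 with unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Hypothetical monthly sales for one region, in thousands of dollars.
region_sales = [120.0, 150.0, 180.0, 210.0]

scaled = min_max_scale(region_sales)    # smallest value -> 0.0, largest -> 1.0
standardized = z_score(region_sales)    # mean 0, standard deviation 1
```

Min-max scaling keeps the original shape of the distribution but is sensitive to outliers, while z-scores are usually the safer choice when regions differ widely in spread.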

By embracing these advanced techniques, organizations are equipped to unearth opportunities and carve out a distinct advantage in the fiercely competitive marketplace. As you delve deeper into these methodologies, consider how they might transform your own strategies and pave the way for unprecedented growth and innovation.

Leveraging Innovative Data Transformation Methods

As organizations strive to harness the power of their data, the implementation of advanced data processing techniques becomes indispensable. These techniques not only facilitate the extraction of valuable insights but also enhance the capabilities of machine learning models. In this section, we will explore several prominent methods that are reshaping the data landscape, providing a foundation for more robust machine learning applications.

Data Transformation Techniques to Enhance Performance

Data transformation is a critical step in preparing raw data for analysis and modeling. By employing methodologies that appropriately manipulate and refine data, businesses can improve the overall performance of machine learning algorithms. Here are some vital data transformation techniques:

- Data Cleaning: The initial step in transforming data involves identifying and correcting errors, inconsistencies, and inaccuracies within datasets. For instance, a large financial institution may discover duplicated customer entries in its database due to data entry errors. Correcting them makes the data more reliable and reduces the risk of decisions based on faulty records. This step matters because a model can only be as accurate as the data it is trained on, so cleaner inputs tend to produce more trustworthy insights.

- Data Augmentation: This technique is particularly powerful in fields such as computer vision and natural language processing. Data augmentation involves generating new synthetic data points by artificially altering existing data while preserving its core attributes. For example, in image recognition tasks, operations such as flipping, rotating, and adding noise to images can effectively increase the size of the training dataset without the need for additional labeled images. This can be especially beneficial for small businesses that may lack sufficient data to train robust models, allowing them to compete with larger entities in the technology landscape.

- Time-Series Analysis: In industries that rely heavily on time-dependent data, such as finance, retail, or healthcare, time-series analysis is crucial. It lets organizations recognize patterns over time, using methods such as seasonal decomposition and autocorrelation to inform model development. For example, a retailer can use historical sales trends to forecast future inventory needs, enabling more effective stock management and reducing waste. This can improve profitability, particularly during seasonal peaks.

- Encoding Categorical Variables: Most machine learning algorithms require numeric input, so categorical data must be transformed. One-hot encoding creates a separate 0/1 column for each category, while label encoding assigns each category an integer, which can imply an ordering that does not exist in the data. For instance, consider a marketing firm analyzing consumer preferences across different demographics. By converting categorical variables like gender or location into numerical representations, the firm can keep that information in its models and use it to tailor marketing strategies to target audiences.
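
To make the difference between the two encodings concrete, here is a small sketch in plain Python. The helper names `label_encode` and `one_hot_encode` and the `locations` sample are hypothetical; libraries such as pandas and scikit-learn provide their own encoders, which this sketch does not try to reproduce.

```python
def label_encode(values):
    """Map each distinct category to an integer, assigned in sorted order."""
    mapping = {cat: idx for idx, cat in enumerate(sorted(set(values)))}
    return [mapping[v] for v in values], mapping


def one_hot_encode(values):
    """Return one 0/1 column per distinct category, columns in sorted order."""
    categories = sorted(set(values))
    rows = [[1 if v == cat else 0 for cat in categories] for v in values]
    return rows, categories


# Hypothetical customer locations.
locations = ["north", "south", "east", "south"]

codes, mapping = label_encode(locations)
rows, categories = one_hot_encode(locations)

print(mapping)     # {'east': 0, 'north': 1, 'south': 2}
print(codes)       # [1, 2, 0, 2]
print(categories)  # ['east', 'north', 'south']
print(rows)        # [[0, 1, 0], [0, 0, 1], [1, 0, 0], [0, 0, 1]]
```

The label codes suggest that "south" is somehow greater than "east", which is why one-hot encoding is often preferred for unordered categories when the number of distinct values is small.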

By integrating these advanced data transformation techniques into their operations, organizations can significantly enhance the performance of their machine learning initiatives. As the data ecosystem continues to evolve, the capability to adeptly process and refine data will empower businesses to stay competitive and lead within their sectors. Consequently, adopting innovative data transformation practices not only positions organizations favorably in the market, but it also fosters a culture of data-driven decision-making that drives sustainable growth and innovation.
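
As one illustration of the time-series methods mentioned earlier, the sketch below computes the sample autocorrelation of a series at a given lag. The function name `autocorrelation` and the `quarterly_sales` figures are hypothetical, and the formula is the common textbook estimate (lagged covariance divided by total variance), assumed here for illustration.

```python
def autocorrelation(series, lag):
    """Sample autocorrelation of `series` at the given non-negative lag."""
    n = len(series)
    if not 0 <= lag < n:
        raise ValueError("lag must be between 0 and len(series) - 1")
    mu = sum(series) / n
    deviations = [x - mu for x in series]
    denominator = sum(d * d for d in deviations)
    if denominator == 0:
        # A constant series has no variance to correlate.
        return 0.0
    numerator = sum(deviations[t] * deviations[t + lag] for t in range(n - lag))
    return numerator / denominator


# Hypothetical sales that repeat the same pattern every four quarters.
quarterly_sales = [10, 20, 30, 20] * 6

same_season = autocorrelation(quarterly_sales, 4)      # strongly positive
opposite_season = autocorrelation(quarterly_sales, 2)  # strongly negative
```

A high value at lag 4 and a negative value at lag 2 is the kind of signal that suggests a yearly cycle in quarterly data, which a forecasting model can then be designed to capture.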

Discover the Advantages of Advanced Data Processing Techniques

As the machine learning landscape evolves, advanced data processing techniques become increasingly essential. These methodologies significantly enhance the efficiency and effectiveness of machine learning models. In this section, we will explore two noteworthy advantages of employing these techniques, making a case for their relevance in today’s data-driven world.

| Category | Key Features |
|---|---|
| Data Quality Enhancement | By utilizing advanced techniques, the accuracy of data cleaning and preprocessing is dramatically increased, ensuring that your model is built on reliable data. |
| Scalability | Advanced data processing allows organizations to manage and analyze larger datasets seamlessly, empowering machine learning models to operate efficiently at scale. |

These enhancements not only improve model accuracy but also optimize computational resources, providing a competitive edge in predictive analytics. Implementing such techniques is critical as businesses strive to harness the full potential of their data, making the adoption of advanced data processing techniques a strategic priority.

Innovative Feature Engineering Strategies

In the realm of machine learning, the significance of feature engineering cannot be overstated. This process involves selecting, modifying, or creating new input features derived from existing data to enhance model performance. Effective feature engineering can lead to dramatic improvements in the predictive power of machine learning algorithms. In this segment, we will delve into various feature engineering techniques that are advancing the capabilities of data processing within machine learning environments.

Essential Techniques for Feature Enrichment

Feature engineering encompasses a vast array of methodologies that cater to the unique requirements of different datasets and modeling tasks. Here are some of the most impactful techniques:

- Polynomial Features: Generating polynomial features from existing numeric variables can help capture non-linear relationships that a model may otherwise overlook. For instance, if one is working with housing price predictions, creating variables that represent the square or cube of features such as square footage or number of bedrooms can provide deeper insight into the pricing structure. By leveraging polynomial features, organizations can unveil underlying patterns that contribute to more accurate predictions.

- Dimensionality Reduction: As data grows in complexity and volume, high-dimensional datasets can hinder model performance because of the curse of dimensionality. Principal Component Analysis (PCA) reduces the number of dimensions while retaining most of the variance in the data, and t-distributed Stochastic Neighbor Embedding (t-SNE) projects data into two or three dimensions, mainly for visualization. For example, applying PCA to a large set of customer transaction data can help organizations view customer segments in a two-dimensional space, simplifying the analysis and interpretation of consumer behavior trends.

- Interaction Features: Sometimes, the interaction between two or more features can carry significant explanatory power. This involves creating new features that represent combinations of existing features. For example, in predicting customer churn, combining features like tenure with service usage can expose trends where long-term customers using specific services show a higher tendency to leave. Not only does this approach enrich the dataset, but it also leads to more nuanced models capable of capturing complex dependencies.

- Text Feature Extraction: With the explosion of unstructured data, especially customer reviews and social media posts, transforming text into usable features has become vital. Term Frequency-Inverse Document Frequency (TF-IDF) converts text into numerical weights that reflect how distinctive each term is for a document, while word embeddings (like Word2Vec) produce vectors that capture semantic similarity between words. For example, an e-commerce platform analyzing product reviews can use TF-IDF to surface the terms that best distinguish one review from the rest, as a starting point for identifying themes that drive purchasing decisions.

- Time Series Feature Creation: In scenarios where data is collected over time, generating features that capture temporal patterns is essential. Features such as rolling averages, moving medians, or lagged values can deliver insights into trends and seasonality. For instance, a bank monitoring transaction data over monthly intervals may create lagged features that highlight consumer behavior changes during holiday seasons, enhancing fraud detection models.
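
The lagged and rolling features described in the last item can be sketched without any libraries. The helper names `lag_feature` and `rolling_mean` and the `monthly_totals` values are hypothetical; positions without enough history are filled with `None` here, which is one convention among several.

```python
def lag_feature(series, lag):
    """Value observed `lag` steps earlier; None where no history exists."""
    if not 0 <= lag <= len(series):
        raise ValueError("lag must be between 0 and len(series)")
    return [None] * lag + list(series[:len(series) - lag])


def rolling_mean(series, window):
    """Mean of the current value and the previous `window - 1` values."""
    if window < 1:
        raise ValueError("window must be at least 1")
    out = []
    for i in range(len(series)):
        if i + 1 < window:
            out.append(None)  # not enough observations yet
        else:
            chunk = series[i + 1 - window:i + 1]
            out.append(sum(chunk) / window)
    return out


# Hypothetical monthly transaction totals.
monthly_totals = [100, 120, 90, 150, 130]

lag_1 = lag_feature(monthly_totals, 1)    # [None, 100, 120, 90, 150]
roll_3 = rolling_mean(monthly_totals, 3)  # first two entries are None
```

Note that this rolling mean includes the current observation. When the feature feeds a model that predicts the current value, the window would typically be shifted back by one step to avoid leaking the target into the input.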

By embracing these innovative feature engineering strategies, organizations can significantly bolster their machine learning applications, creating models that not only perform better but also provide deeper insights into the data they process. The integration of robust feature engineering practices is integral to staying ahead in the rapidly evolving tech landscape, where data-driven decisions are paramount in achieving business goals and operational excellence.
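
For the text feature extraction technique listed above, the following sketch computes plain TF-IDF weights by hand. The function name `tf_idf`, the whitespace tokenizer, and the sample `reviews` are assumptions made for illustration; library implementations often use smoothed or normalized variants, so their numbers will differ from these.

```python
import math
from collections import Counter


def tf_idf(documents):
    """Return one {term: weight} dict per document.

    tf  = term count / number of terms in the document
    idf = log(number of documents / number of documents containing the term)
    """
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    # Count, for each term, how many documents contain it at least once.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    weights = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        weights.append({
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights


# Hypothetical product reviews.
reviews = [
    "fast shipping great price",
    "great price poor packaging",
    "great quality fast shipping",
]

scores = tf_idf(reviews)
```

In this sample, "great" appears in every review and so receives a weight of zero, while "poor" appears in only one review and receives the highest weight there. That is the sense in which TF-IDF highlights distinctive terms rather than frequent ones.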

Conclusion: The Future of Machine Learning through Advanced Data Processing

In the dynamic world of machine learning, the ability to process and interpret data effectively is becoming increasingly critical. Advanced data processing techniques serve as the backbone of successful machine learning initiatives, offering methodologies that not only enhance model performance but also unlock new avenues for insights and innovations. From innovative feature engineering strategies to powerful dimensionality reduction techniques, the capabilities at researchers’ and data scientists’ fingertips are expanding rapidly.

As highlighted in our discussion, employing methods such as generating polynomial features, creating interaction features, and leveraging text feature extraction ultimately leads to a more nuanced understanding of complex datasets. Moreover, the effective application of these techniques can give organizations a competitive edge, allowing them to harness the full potential of their data and make informed, data-driven decisions. For instance, companies in the United States are already using these advanced techniques to enhance customer experiences, optimize supply chains, and forecast market trends.

However, it is essential to recognize that the landscape of machine learning is continually evolving. Keeping abreast of emerging techniques and tools is vital for practitioners eager to stay relevant in the industry. By fostering a culture of experimentation and continual learning, organizations can ensure they remain at the forefront of innovation. Ultimately, embracing advanced data processing techniques not only paves the way for improved models but also drives substantial business value in an increasingly data-centric world. As we look to the future, the transformative potential of machine learning will undoubtedly hinge on our ability to refine and adapt these sophisticated data processing techniques.