A Complex Landscape of AI Understanding

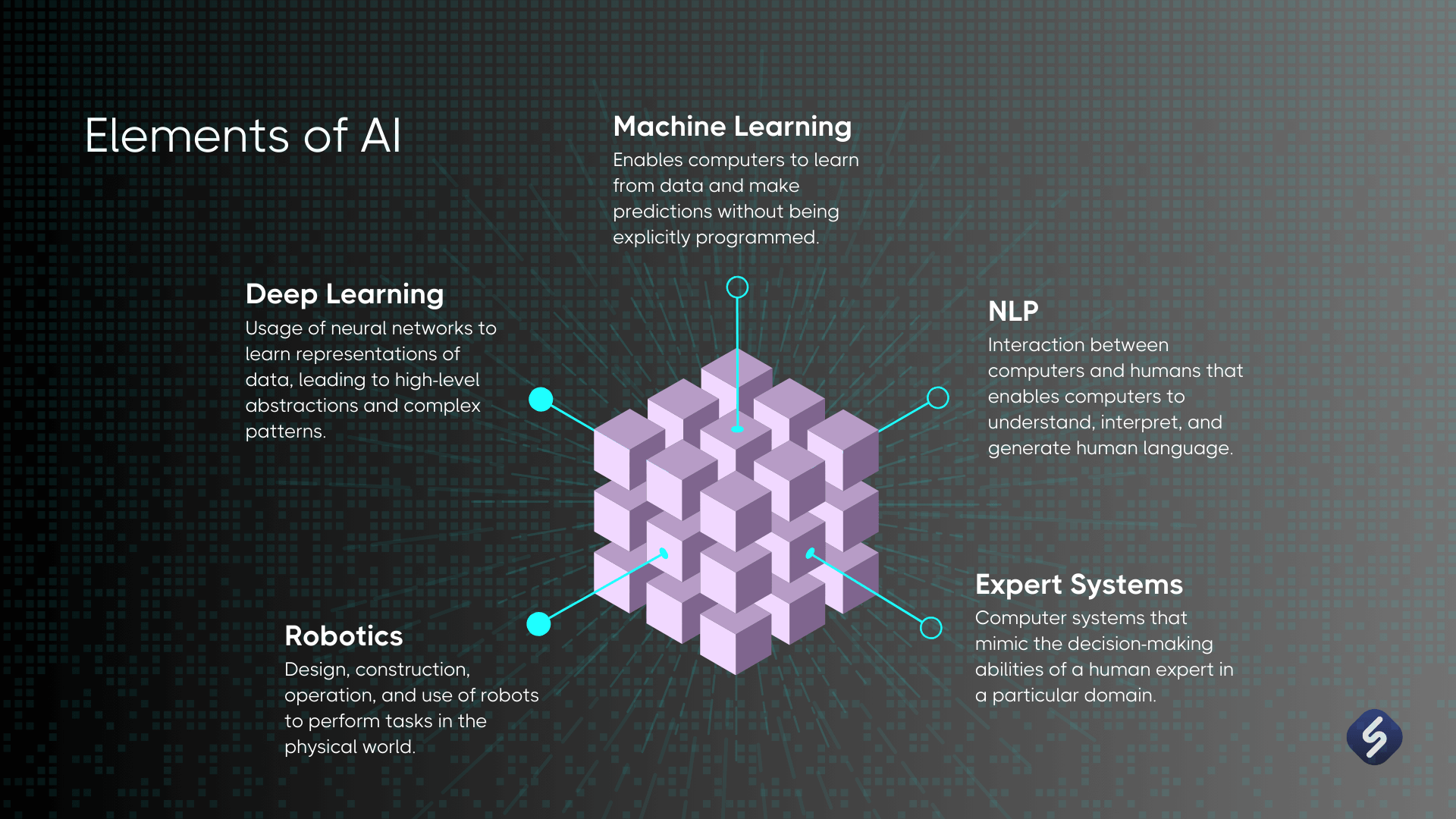

The rapid advancement of artificial intelligence (AI) has brought significant changes to fields including healthcare, finance, and entertainment. One of the most pressing challenges, however, is understanding how these systems arrive at their conclusions. Neural networks sit at the center of this problem: they are often likened to a black box, delivering strong predictive performance through reasoning that even their developers can struggle to decipher.

Understanding AI decision-making is increasingly vital for several reasons:

- Accountability: In domains such as healthcare, where AI can determine treatment plans, or in finance, where it can impact loan approvals, knowing the reasoning behind AI decisions is essential. For instance, if an AI system denies a healthcare claim based on radiology imaging results, understanding how that decision was reached could help healthcare providers appeal the decision or ensure patients receive necessary care.

- Bias Detection: AI systems are not immune to biases present in training data, which can lead to unfair outcomes. For example, facial recognition technology has faced criticism for higher error rates on women and people with darker skin tones. By understanding how neural networks function, developers can identify and address these biases, enhancing fairness in AI applications.

- Enhanced Trust: As AI continues to integrate into everyday life, from virtual assistants to autonomous vehicles, establishing a transparent decision-making process is crucial. With clear explanations of AI decisions, users are more likely to trust these technologies, which is vital for their successful adoption and utilization.

To demystify the workings of neural networks, researchers have developed various interpretive methods, such as:

- Feature Attribution: This approach involves pinpointing which inputs, or features, significantly influence the outputs of AI models. For example, in a credit scoring AI, determining whether income level or payment history carried more weight in a loan application can provide insights into decision-making processes.

- Visualization Techniques: Graphical tools that illustrate how neural networks process inputs can also be invaluable. Heat maps or layer activations can showcase how different neurons within a network respond to various stimuli, offering a glimpse into the complex interactions that underpin decision-making.

- Model-Agnostic Approaches: Techniques such as LIME (Local Interpretable Model-Agnostic Explanations) are designed to be applicable across a variety of AI systems, enhancing accessibility and understanding regardless of the underlying model.
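
The model-agnostic idea behind LIME can be illustrated with a small local surrogate: perturb one input, query the black-box model, and fit a proximity-weighted linear model whose coefficients act as local feature attributions. The following is a minimal sketch in that spirit, not the `lime` library itself; `black_box_score`, its two features, and every parameter value are hypothetical and chosen only for illustration.

```python
import numpy as np

def black_box_score(X):
    """Hypothetical credit model over columns [income, payment_history]."""
    income, history = X[:, 0], X[:, 1]
    return 1.0 / (1.0 + np.exp(-(0.5 * income + 2.0 * history)))

def local_surrogate(predict, x, n_samples=2000, scale=0.1, kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear model around x; return per-feature slopes."""
    rng = np.random.default_rng(seed)
    # 1. Sample perturbed inputs in the neighbourhood of x and query the model.
    Z = x + rng.normal(0.0, scale, size=(n_samples, x.size))
    y = predict(Z)
    # 2. Give nearby samples more weight than distant ones.
    dist = np.linalg.norm(Z - x, axis=1)
    w = np.exp(-(dist ** 2) / (kernel_width ** 2))
    # 3. Weighted least squares with an intercept column.
    A = np.hstack([np.ones((n_samples, 1)), Z - x])
    sw = np.sqrt(w)[:, None]
    coef, *_ = np.linalg.lstsq(A * sw, y * sw[:, 0], rcond=None)
    return coef[1:]  # drop the intercept, keep the feature slopes

x = np.array([0.2, -0.1])  # one (standardized) applicant, assumed for the demo
weights = local_surrogate(black_box_score, x)
print(dict(zip(["income", "payment_history"], weights.round(3))))
```

In this toy setup the surrogate assigns a larger local weight to payment history than to income, which matches how the hypothetical model was constructed. The surrogate only describes the model near `x`; a different applicant can yield different weights.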

As these tools mature, it becomes clear that understanding neural networks is not merely an academic exercise. By exposing how AI systems reach their decisions, we can inform the public, empower users, and build systems that are not only intelligent but also ethical and trustworthy.


Decoding the Neural Network Black Box

As artificial intelligence (AI) systems spread across sectors, interpreting neural networks has become increasingly important. These systems are loosely inspired by the brain’s interconnected neurons, which lets them process vast amounts of data and make complex decisions. The trade-off for this capability is often a lack of transparency. The appeal of AI lies in its efficiency, but when its decisions affect real lives, the demand for clarity intensifies. So, how can stakeholders grasp the underlying mechanics of these neural networks?

One effective strategy is to understand the key concepts in a neural network’s architecture. At its core, a neural network is composed of layers: an input layer, one or more hidden layers, and an output layer. Each layer consists of neurons, the processing units of the network. A neuron computes a weighted sum of its inputs, adds a bias, and applies an activation function; the weights and biases are the values a learning algorithm adjusts during training. The interplay between these layers determines what the network can learn and how it turns inputs into decisions.

To better visualize how decisions are formed, forward propagation and backpropagation are crucial processes to explore:

- Forward Propagation: During this phase, input data travels through the network one layer at a time. Each neuron computes a weighted sum of its inputs and applies an activation function, which determines how strongly its signal is passed to the next layer. This flow culminates in the output that the model generates, be it a classification, prediction, or recommendation.

- Backpropagation: After forward propagation, a loss function measures the discrepancy between the predicted and actual outcomes. Backpropagation uses the chain rule to compute how much each weight contributed to that loss, and an optimizer such as gradient descent then adjusts the weights to reduce it. Repeating this process over many examples is how the model’s performance improves.
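
The two phases above can be sketched for a tiny network in plain NumPy. This is an illustrative example under assumed choices (a 2-4-1 network, tanh and sigmoid activations, cross-entropy loss, XOR-style toy data, a learning rate of 0.5); none of these come from a specific system.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])  # toy inputs
y = np.array([[0.0], [1.0], [1.0], [0.0]])                      # toy targets

# Weights and biases for one hidden layer (4 neurons) and one output neuron.
W1, b1 = rng.normal(0.0, 1.0, (2, 4)), np.zeros((1, 4))
W2, b2 = rng.normal(0.0, 1.0, (4, 1)), np.zeros((1, 1))
lr = 0.5
losses = []

for step in range(2000):
    # Forward propagation: weighted sums followed by activation functions.
    h = np.tanh(X @ W1 + b1)
    p = sigmoid(h @ W2 + b2)
    p_safe = np.clip(p, 1e-12, 1.0 - 1e-12)  # avoid log(0)
    loss = -np.mean(y * np.log(p_safe) + (1 - y) * np.log(1 - p_safe))
    losses.append(loss)

    # Backpropagation: chain rule from the loss back to every weight.
    dz2 = (p - y) / len(X)             # gradient at the output pre-activation
    dW2 = h.T @ dz2
    db2 = dz2.sum(axis=0, keepdims=True)
    dh = dz2 @ W2.T
    dz1 = dh * (1.0 - h ** 2)          # derivative of tanh
    dW1 = X.T @ dz1
    db1 = dz1.sum(axis=0, keepdims=True)

    # Gradient descent update.
    W1 -= lr * dW1
    b1 -= lr * db1
    W2 -= lr * dW2
    b2 -= lr * db2

print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

The forward pass produces predictions, backpropagation produces the gradients, and the update step is the part that actually changes the weights. Keeping the three separate mirrors how most frameworks organize training.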

Beyond understanding these foundational processes, several innovative methods have emerged to elucidate the black box nature of neural networks:

- SHAP (SHapley Additive exPlanations): This technique, based on Shapley values from cooperative game theory, assigns each feature an importance value reflecting its contribution to a prediction. The values for one prediction sum to the difference between the model’s output and a baseline output, so SHAP gives an additive account of each model decision.

- Layer-wise Relevance Propagation (LRP): LRP decomposes the network’s output by propagating relevance backward, layer by layer, from the output through each contributing neuron to the input features. This helps in visualizing how different features influence the final decision.

- Saliency Maps: These graphical representations highlight the areas in input data that most influenced the network’s decisions. They are especially useful in image recognition tasks, where pinpointing key visual elements can clarify model behavior.
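
To make the Shapley idea behind SHAP concrete, the sketch below computes exact Shapley values by brute force over every feature subset. It is not the `shap` library, which relies on faster approximations; the three-feature `model` and the all-zeros `baseline` are hypothetical, and "removing" a feature is assumed to mean replacing it with its baseline value.

```python
from itertools import combinations
from math import factorial

import numpy as np

def model(x):
    """Hypothetical model with an interaction between features 0 and 1."""
    return 3.0 * x[0] + 2.0 * x[1] + x[0] * x[1] - x[2]

def shapley_values(f, x, baseline):
    """Exact Shapley values; cost grows exponentially with the feature count."""
    n = len(x)
    phi = np.zeros(n)

    def value(subset):
        # Features in `subset` take their real value, the rest the baseline.
        z = baseline.copy()
        idx = np.array(subset, dtype=int)
        z[idx] = x[idx]
        return f(z)

    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for S in combinations(others, size):
                # Marginal contribution of feature i when added to subset S.
                phi[i] += weight * (value(S + (i,)) - value(S))
    return phi

x = np.array([1.0, 2.0, 3.0])
baseline = np.zeros(3)
phi = shapley_values(model, x, baseline)
print(phi)  # [ 4.  5. -3.]
```

The interaction term `x[0] * x[1]` contributes 2 to the output and is split evenly between the two features involved. The attributions sum to `model(x) - model(baseline)`, which is the additivity property that gives SHAP its name.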

The push towards understanding AI decision-making is not merely an academic pursuit; it holds critical implications for accountability and fairness in AI deployment. A transparent AI ecosystem benefits developers, users, and regulatory bodies alike. By bridging the gap between complex algorithms and human comprehension, we can cultivate a more informed society prepared to embrace AI’s transformative potential, one decision at a time.

| Category | Description |
|---|---|
| Transparency | With neural network interpretation, users gain insights into the decision-making process of AI. |
| Accountability | Understanding how models arrive at conclusions enhances responsibility and trust in AI systems. |
| Enhanced Learning | Interpretation strategies such as feature visualization assist in improving and optimizing AI models. |
| Bias Detection | Identifying biases in training data enhances model fairness and improves user confidence. |
| User Engagement | Providing explainable AI fosters greater user interaction and collaboration with technology. |

Understanding neural networks makes AI decisions more transparent, accountable, and justifiable. Transparency lets users trace how a model arrives at a specific outcome, which is essential for building trust among users and stakeholders. The ability to explain decisions also increases accountability and yields lessons that can improve the models themselves: techniques like feature visualization can reveal what drives a model’s predictions, and tools that expose biases in training datasets help developers correct them before they compromise results. Finally, explainable AI promotes deeper user engagement, as individuals may feel more comfortable using technology they understand. That involvement is valuable in developing AI systems that genuinely meet human needs and ethical standards.


Enhancing Transparency Through Visualization Techniques

As the demand for transparency in artificial intelligence grows, researchers and practitioners are continuously exploring novel ways to interpret and understand neural networks. While explaining the inner workings of these models is complex, visualization techniques have emerged as powerful tools for demystifying AI decisions. By transforming abstract numerical values into interpretive graphics or diagrams, these approaches create avenues for stakeholders to engage with and comprehend the decision-making processes of neural networks.

One of the most popular visualization techniques is Grad-CAM (Gradient-weighted Class Activation Mapping). This method shows which regions of an input, such as an image, most influence a model’s prediction. It weights the feature maps of a convolutional layer by the gradients of the target class score and combines them into a heat map, so users can see where the network focuses when coming to a decision. For instance, in the medical field, Grad-CAM can help radiologists understand which parts of an X-ray prompted a diagnosis, potentially improving patient care and outcomes.
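
The core Grad-CAM computation is short once the feature maps of a convolutional layer and the gradients of the class score with respect to them are available. The sketch below assumes both have already been extracted from a model (in practice through a deep learning framework’s hooks or automatic differentiation) and uses small hand-made arrays as stand-ins; the function name `grad_cam` is illustrative.

```python
import numpy as np

def grad_cam(activations, gradients):
    """Combine feature maps into a heat map.

    activations, gradients: arrays of shape (channels, height, width), where
    gradients holds d(class score)/d(activations) for the class of interest.
    """
    # Channel importance: average each channel's gradient over all positions.
    alpha = gradients.mean(axis=(1, 2))
    # Weighted sum of the feature maps, one weight per channel.
    cam = np.tensordot(alpha, activations, axes=1)
    # Keep only regions that push the class score up.
    cam = np.maximum(cam, 0.0)
    # Scale to [0, 1] for display, guarding against an all-zero map.
    if cam.max() > 0:
        cam = cam / cam.max()
    return cam

# Stand-in data: two 3x3 feature maps instead of real network activations.
activations = np.zeros((2, 3, 3))
activations[0, 0, 0] = 1.0  # channel 0 fires in the top-left corner
activations[1, 2, 2] = 1.0  # channel 1 fires in the bottom-right corner
gradients = np.stack([np.full((3, 3), 0.5), np.full((3, 3), -0.5)])

heatmap = grad_cam(activations, gradients)
print(heatmap)
```

Here channel 0 supports the class and channel 1 opposes it, so only the top-left corner lights up. With a real model the heat map would be resized to the input resolution and overlaid on the image.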

Another effective technique is t-SNE (t-distributed Stochastic Neighbor Embedding). This method visualizes high-dimensional data by embedding it in a two- or three-dimensional scatter plot that keeps similar points close together. When applied to a network’s outputs or hidden-layer activations, t-SNE can reveal clustering patterns, helping to identify groups of similar inputs and how the model perceives them. This view is useful for diagnosing model performance, understanding category boundaries, and detecting systemic biases within the network.
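
As a rough illustration, the snippet below runs t-SNE on synthetic 16-dimensional vectors that stand in for a network’s internal representations. It assumes scikit-learn is installed; the two Gaussian clusters, the perplexity value, and the variable names are assumptions made for the demo.

```python
import numpy as np
from sklearn.manifold import TSNE

rng = np.random.default_rng(0)

# Stand-in for learned representations: two groups of 16-dimensional vectors.
cluster_a = rng.normal(0.0, 0.5, size=(30, 16))
cluster_b = rng.normal(5.0, 0.5, size=(30, 16))
features = np.vstack([cluster_a, cluster_b])

# Embed into 2-D; perplexity must be smaller than the number of samples.
tsne = TSNE(n_components=2, perplexity=10, init="pca", random_state=0)
embedding = tsne.fit_transform(features)

print(embedding.shape)  # (60, 2)
```

Each row of `embedding` can be plotted as a point and colored by class label or predicted label. Distances between clusters in a t-SNE plot are not reliable measurements, so the plot is better suited to spotting groupings than to quantifying them.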

Furthermore, partial dependence plots (PDPs) help interpret the impact of specific features on predictions. A PDP shows how the model’s average prediction changes as one feature (or a small set of features) is varied while the others keep their observed values, so users can see how adjustments in input variables sway model decisions. This is particularly valuable for businesses in the finance sector, where clearer insight into credit scoring models can lead to fairer decision-making.
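
A partial dependence curve can be computed by hand: fix one feature to each value on a grid for every row of the dataset, then average the model’s predictions. The sketch below does this for a hypothetical `credit_model` with two made-up features (income and debt ratio) on synthetic data.

```python
import numpy as np

def credit_model(X):
    """Hypothetical approval probability: rises with income (column 0),
    falls with debt ratio (column 1)."""
    return 1.0 / (1.0 + np.exp(-(1.5 * X[:, 0] - 2.0 * X[:, 1])))

def partial_dependence(predict, X, feature, grid):
    """Average prediction when `feature` is forced to each grid value."""
    curve = []
    for value in grid:
        X_mod = X.copy()
        X_mod[:, feature] = value  # overwrite one feature for every row
        curve.append(predict(X_mod).mean())
    return np.array(curve)

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 2))          # synthetic, standardized applicants
grid = np.linspace(-2.0, 2.0, 9)       # income values to probe
pdp_income = partial_dependence(credit_model, X, feature=0, grid=grid)

for value, avg in zip(grid, pdp_income):
    print(f"income={value:+.1f}  mean approval={avg:.3f}")
```

Plotting `pdp_income` against `grid` gives the partial dependence plot for income. Because the curve is an average, it can hide cases where the feature affects different subgroups in opposite directions.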

To promote a broader understanding of AI ethics and accountability, many organizations are investing in explainable AI (XAI) frameworks. These frameworks employ a variety of techniques, including example-based explanations that highlight instances from training data similar to a specific prediction. For example, an AI model tasked with recognizing fraudulent transactions can help investigators by showcasing past incidents that share characteristics with new cases flagged for review. By grounding decisions in real-world examples, XAI contributes to building trust in AI systems.
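
One simple form of example-based explanation is a nearest-neighbor lookup: for a flagged case, retrieve the most similar past cases. The sketch below assumes similarity is Euclidean distance on standardized features; the transaction data, the feature names, and the function `nearest_examples` are invented for illustration.

```python
import numpy as np

def nearest_examples(train_X, query, k=3):
    """Return indices and distances of the k training rows closest to query."""
    # Standardize so that no single feature dominates the distance.
    mu = train_X.mean(axis=0)
    sigma = train_X.std(axis=0) + 1e-12
    diffs = (train_X - mu) / sigma - (query - mu) / sigma
    dist = np.linalg.norm(diffs, axis=1)
    order = np.argsort(dist)[:k]
    return order, dist[order]

# Hypothetical past transactions: [amount, hour_of_day]
train_X = np.array([
    [20.0, 14.0],
    [25.0, 13.0],
    [900.0, 3.0],
    [950.0, 2.0],
    [30.0, 15.0],
    [870.0, 4.0],
])
train_labels = np.array(["ok", "ok", "fraud", "fraud", "ok", "fraud"])

query = np.array([920.0, 3.0])  # new transaction flagged for review
idx, dist = nearest_examples(train_X, query, k=3)
for i, d in zip(idx, dist):
    print(f"case {i}: {train_X[i]} label={train_labels[i]} distance={d:.3f}")
```

An investigator sees the retrieved cases alongside the flag, which grounds the decision in concrete precedents. For a neural network, the same lookup is often done on learned embeddings rather than raw features, which this sketch does not cover.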

Moreover, as neural networks drive advancements in fields such as autonomous vehicles, healthcare diagnostics, and financial analysis, the stakes for biased outcomes are high. Giving stakeholders access to interpretive tools not only improves operations but also builds public confidence in AI applications. Building interpretability into the AI lifecycle, from development through deployment, is therefore essential for fostering a responsible AI ecosystem.

As we continue to navigate the evolving landscape of artificial intelligence, prioritizing transparency and interpretability will enable a more profound understanding of how neural networks make decisions. As AI becomes increasingly intertwined with our everyday lives, understanding the algorithms that shape outcomes is not only a technical necessity but a societal imperative.


Conclusion

In summary, understanding neural networks and their decision-making processes is more vital than ever. As artificial intelligence enters critical sectors such as healthcare, finance, and autonomous systems, transparent operation is essential for both ethical and practical reasons. Visualization techniques such as Grad-CAM, t-SNE, and partial dependence plots give stakeholders tools to examine how AI systems behave. By rendering abstract data in more digestible visual formats, these methods help developers, regulators, and end-users comprehend the often enigmatic nature of neural networks.

Moreover, the commitment to explainable AI (XAI) frameworks not only promotes a culture of accountability but also serves as a bridge to cultivate public trust. In an era where independent verification of algorithmic fairness and accuracy is paramount, these frameworks illuminate the paths AI systems take in their decision-making processes, ensuring all parties involved can evaluate outcomes critically. As the landscape of AI continues to evolve, prioritizing interpretability will not just facilitate better technical performance but will fundamentally underscore the societal obligation to align AI advancements with human values.

Ultimately, understanding AI decisions transcends technical necessity; it is a societal imperative that mandates a collaborative approach between researchers, practitioners, and the public. As we strive for a future where AI systems operate fairly and transparently, continuous investment in interpretability research will be pivotal for realizing a trustworthy and responsible AI ecosystem.