A Glimpse into the Evolution of Neural Networks

The development of artificial intelligence has charted a course through numerous significant breakthroughs, with neural networks standing at the heart of this evolution. These systems have transformed from rudimentary models to intricate architectures capable of performing tasks that were once thought to be uniquely human. Understanding this trajectory requires a look at key milestones that have been pivotal in shaping modern AI. One of the earliest foundational stones laid in this journey was the introduction of the Perceptron in 1958. This simple linear classifier set the stage for future innovations and marked the inception of neural network research.

In the 1980s, interest in neural networks was rekindled, largely because of the popularization of the backpropagation algorithm. Backpropagation made it practical to train multi-layer networks by propagating the output error backward through the layers and adjusting each weight to reduce it. A notable example from this period is the 1986 work of Rumelhart, Hinton, and Williams, which showcased the potential of multi-layer neural networks to learn complex patterns in data.

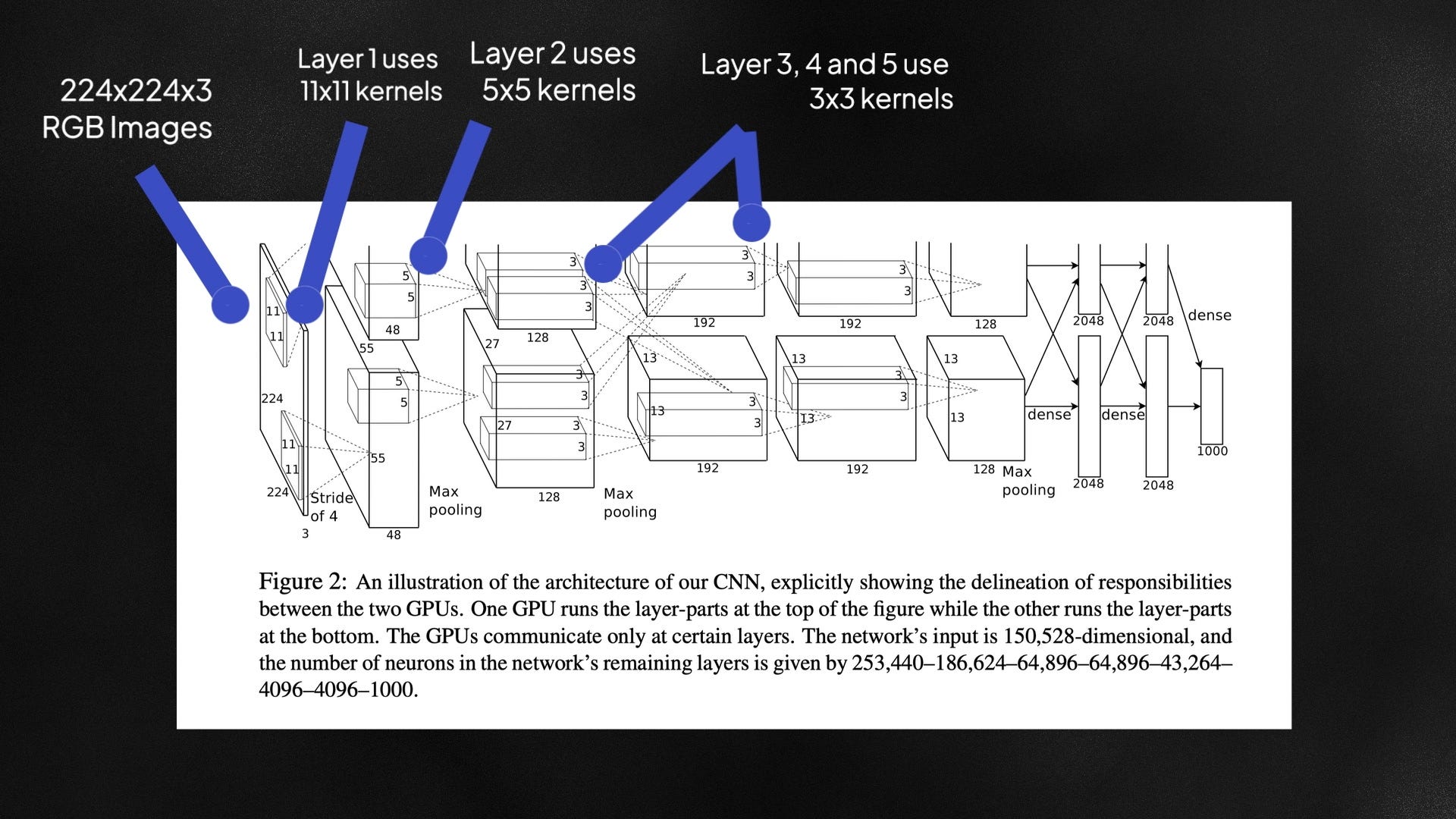

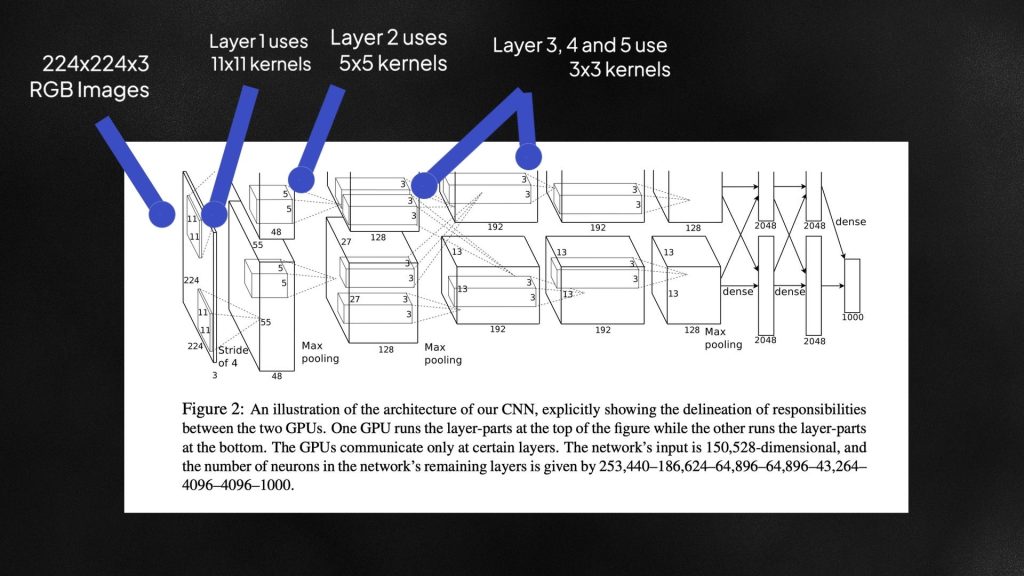

Fast forward to the 2010s, a decade that marked a watershed moment in AI. It was during this time that deep learning emerged as a dominant force, illustrated by the remarkable performance of Convolutional Neural Networks (CNNs). These networks achieved groundbreaking accuracy in image and speech recognition tasks. The 2012 ImageNet competition stands out, wherein a deep learning model outperformed traditional methods, effectively demonstrating the capabilities of neural networks. More importantly, such advancements have enabled applications like facial recognition in smartphones and voice-activated assistants, which have become ubiquitous in American households.

The journey of neural networks is not just about the algorithms; it encompasses a series of underlying principles that have evolved with time. One such principle is the move from single-layer networks to the now ubiquitous multi-layered architectures. These deeper models are far better at analyzing and interpreting complex data.

Moreover, the kinds of data neural networks can handle have changed dramatically. Early networks were limited to linearly separable classification problems, while recent architectures process unstructured data such as images and natural language, making them more versatile than ever.

Enhancements in hardware—specifically the use of Graphics Processing Units (GPUs)—have significantly boosted computational power, allowing researchers and engineers to train more complex models in less time. This leap in technology has accelerated the development cycle for AI applications across diverse industries, from healthcare to finance.

As we explore this captivating narrative of neural networks, it’s imperative to consider how each stride forward has enriched the robust frameworks in use today. The ongoing advancements not only carry implications for technological progression but also provoke curiosity about the untapped potential that lies ahead in the realm of neural networks. The future promises a frontier ripe for exploration, wherein AI could shape our daily lives in unprecedented ways.

From Perceptron to Backpropagation: A Defining Era

The journey of neural networks truly began with the Perceptron, developed by Frank Rosenblatt in the late 1950s. This early model was a single-layer network that could learn to classify inputs into two distinct categories. Although simple, the Perceptron built on the earlier McCulloch-Pitts model of an artificial neuron and added a learning rule that adjusts the weights from examples, laying the groundwork for future developments. However, it became evident that the Perceptron had limitations, particularly in handling problems that are not linearly separable, such as XOR.
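The learning rule described above can be sketched in a few lines of Python. This is a minimal illustration rather than Rosenblatt's original formulation: the function names, the learning rate, and the epoch count are all assumptions chosen for the example. It trains the same model on AND, which is linearly separable, and on XOR, which is not.

```python
def predict(weights, bias, x):
    """Step activation: output 1 if the weighted sum is positive, else 0."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: the weights change only on misclassified inputs."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


def accuracy(samples, weights, bias):
    hits = sum(predict(weights, bias, x) == t for x, t in samples)
    return hits / len(samples)


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_w, and_b = train_perceptron(AND)
xor_w, xor_b = train_perceptron(XOR)
and_acc = accuracy(AND, and_w, and_b)  # reaches 1.0: AND is linearly separable
xor_acc = accuracy(XOR, xor_w, xor_b)  # stays below 1.0: no single line separates XOR
```

No amount of extra training fixes the XOR case, because a single linear boundary cannot separate its two classes.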

As researchers grappled with these constraints, a significant turning point occurred in the 1980s. The resurgence of interest in neural networks was largely propelled by the popularization of the backpropagation algorithm. This method applies the chain rule to calculate gradients layer by layer, from the output back toward the input, so that every weight in a multi-layer network can be adjusted to reduce the error. The work of scholars such as Geoffrey Hinton, David Rumelhart, and Ronald J. Williams was instrumental in demonstrating that multi-layer networks could learn representations beyond the reach of their single-layer counterparts, setting off a wave of excitement and exploration in the field.
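To make the idea of calculating gradients across layers concrete, here is a small sketch in plain Python. It assumes a toy network (two inputs, two tanh hidden units, one linear output) with a squared-error loss. The weights, input, and function names are invented for illustration. The backpropagated gradients are compared with finite-difference estimates as a sanity check.

```python
import math


def forward(params, x):
    """Two inputs -> two tanh hidden units -> one linear output."""
    w1, b1, w2, b2 = params
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sum(w * h for w, h in zip(w2, hidden)) + b2
    return hidden, output


def loss(params, x, target):
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2


def backprop(params, x, target):
    """Apply the chain rule from the output back to the first layer."""
    w1, b1, w2, b2 = params
    hidden, output = forward(params, x)
    d_out = output - target                      # dL/d(output)
    grad_w2 = [d_out * h for h in hidden]
    grad_b2 = d_out
    # Error reaching each hidden unit, using tanh'(z) = 1 - tanh(z)^2.
    d_hidden = [d_out * w * (1.0 - h * h) for w, h in zip(w2, hidden)]
    grad_w1 = [[d * xi for xi in x] for d in d_hidden]
    grad_b1 = d_hidden
    return grad_w1, grad_b1, grad_w2, grad_b2


def numeric_grad_w1(params, x, target, i, j, eps=1e-6):
    """Central finite difference for one first-layer weight."""
    w1, b1, w2, b2 = params

    def shifted(delta):
        w1_new = [row[:] for row in w1]
        w1_new[i][j] += delta
        return loss((w1_new, b1, w2, b2), x, target)

    return (shifted(eps) - shifted(-eps)) / (2 * eps)


# Arbitrary illustrative weights and a single training example.
params = ([[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1], [1.2, -0.7], 0.05)
x, target = [1.0, 0.5], 1.0

grad_w1, _, _, _ = backprop(params, x, target)
max_diff = max(
    abs(grad_w1[i][j] - numeric_grad_w1(params, x, target, i, j))
    for i in range(2) for j in range(2)
)
```

One backward pass yields the gradient for every weight at once. The finite-difference check needs two extra forward passes per weight, which is the efficiency gap that made backpropagation practical for larger networks.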

Central to this renaissance was the realization that neural networks could be trained to tackle increasingly complex tasks by adding one or more hidden layers between the input and the output. The advantages of these multi-layered configurations included:

- Higher capacity to learn: More hidden layers gave the network greater capacity to represent complex functions of the input data.

- Non-linear transformations: Each layer applied a non-linear transformation, which allowed neural networks to model intricate patterns far beyond simple linear decision boundaries.

- Robustness: When properly regularized, deeper models could learn features that were less sensitive to anomalies and noise in the data.
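The role of the non-linear transformation can be illustrated with a tiny hand-built network. In this sketch the weights are chosen by hand rather than learned, and ReLU is used as the non-linearity. Both are assumptions made purely for illustration. With the ReLU in place the two-layer network computes XOR. With it removed, the same wiring collapses into a single linear function.

```python
def relu(z):
    return max(0.0, z)


def xor_net(x1, x2):
    """Two hidden ReLU units with hand-picked (not learned) weights."""
    h1 = relu(x1 + x2)          # grows with the number of active inputs
    h2 = relu(x1 + x2 - 1.0)    # fires only when both inputs are on
    return h1 - 2.0 * h2


def linear_stack(x1, x2):
    """Same wiring with the ReLU removed: collapses to one linear map."""
    h1 = x1 + x2
    h2 = x1 + x2 - 1.0
    return h1 - 2.0 * h2        # algebraically equal to 2 - (x1 + x2)


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
xor_outputs = [xor_net(a, b) for a, b in inputs]          # [0.0, 1.0, 1.0, 0.0]
linear_outputs = [linear_stack(a, b) for a, b in inputs]  # [2.0, 1.0, 1.0, 0.0]
```

Stacking layers adds expressive power only when a non-linearity sits between them. Otherwise the layers compose into one linear transformation.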

Alongside backpropagation, regularization techniques were developed to address overfitting and improve the network’s performance in real-world applications. Weight decay came into use early on, and dropout followed much later, in the 2010s; both became staples of machine learning workflows.
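Both techniques are simple to express in code. The sketch below assumes the common "inverted" form of dropout, where surviving activations are rescaled during training, and L2-style weight decay added to a plain gradient step. The function names, rates, and values are illustrative assumptions, not taken from any particular library.

```python
import random


def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero each unit with probability `rate`, rescale the rest."""
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


def sgd_step_with_weight_decay(weights, grads, lr=0.1, decay=0.01):
    """Gradient step on loss + (decay / 2) * sum(w^2)."""
    return [w - lr * (g + decay * w) for w, g in zip(weights, grads)]


rng = random.Random(0)  # seeded so the random mask is reproducible
hidden = [1.0, 2.0, 3.0, 4.0]
train_out = dropout(hidden, rate=0.5, rng=rng)                  # some units zeroed, others doubled
eval_out = dropout(hidden, rate=0.5, rng=rng, training=False)   # unchanged at inference time

# With zero gradient, weight decay alone pulls every weight toward zero.
shrunk = sgd_step_with_weight_decay([1.0, -2.0], grads=[0.0, 0.0])
```

Dropout discourages units from relying on one another by randomly silencing them during training. Weight decay penalizes large weights, which tends to favor smoother functions.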

This newfound potential attracted attention from various industries eager to leverage AI’s capabilities. Applications began sprouting up in fields such as finance, where neural networks were applied to modeling stock price movements, and in healthcare, where they were used to help diagnose diseases from medical images. However, as enthusiasm grew, so did the need for sophisticated hardware capable of supporting these advanced models.

The integration of Graphics Processing Units (GPUs) in the training process marked another revolutionary shift. Initially designed for video gaming, GPUs became indispensable in accelerating the computational demands of deep learning. With their parallel processing capabilities, GPUs allowed researchers to handle large datasets and complex algorithms, fostering more rapid advancements in neural network architectures.

This fusion of powerful algorithms and enhanced technology laid a strong foundation for the remarkable advancements we are witnessing today. From the basic Perceptron to the intricate designs of contemporary deep learning models, the evolution of neural networks is a testament to the innovation that continues to redefine artificial intelligence.

The Evolution of Neural Networks: From Perceptron to Deep Architecture

The evolution of neural networks has been a fascinating journey that began with the humble Perceptron. Developed in the late 1950s, the Perceptron was one of the earliest trainable algorithms for classifying inputs based on their features, laying the groundwork for modern artificial intelligence. However, its simplicity came with limitations, notably the inability to solve non-linear problems. This shortcoming led to the rise of multilayer perceptrons (MLPs) in the 1980s, which used hidden layers to increase representational capacity and tackle more complex datasets.

In the 21st century, the advent of deep learning transformed the landscape of neural networks. As processing power increased and large datasets became available, deep architectures became the backbone of image recognition, natural language processing, and numerous other applications. Innovations such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs) built upon earlier models, enabling machines to reach accuracy that rivals human performance on some benchmark tasks.

| Category | Key Features |
|---|---|
| Perceptron | Single-layer neural network with a step (threshold) activation function. |
| Deep Learning | Multi-layered architectures capable of capturing complex patterns. |

Today, neural networks leverage vast datasets and complex algorithms to achieve astounding performance in fields like computer vision and speech recognition. Understanding this evolution not only sheds light on the mechanisms of today’s systems but also provides insight into future advancements in artificial intelligence.

As the interplay between theory and application continues to evolve, the neural network landscape is ripe for exploration. Regular discussions in the field highlight how breakthroughs in deep learning are paving the way for truly intelligent systems.

The Dawn of Deep Learning: Unlocking the Power of Complex Architectures

The fervor surrounding the backpropagation algorithm and the introduction of multi-layer perceptrons set the stage for the rise of deep learning. During the 2000s, researchers began to explore more intricate architectures that would ultimately revolutionize the field of artificial intelligence.

This shift was significantly influenced by the advent of the deep belief network (DBN) and convolutional neural networks (CNNs). Pioneered by Geoffrey Hinton and his colleagues, DBNs brought forth a new method of unsupervised learning, allowing layers to extract features from data independently before fine-tuning through supervised learning. This functionality markedly improved the network’s efficiency in understanding and processing vast amounts of unstructured data.

At the same time, CNNs emerged as a pivotal force within the realm of image recognition. Their architecture, built from convolutional layers that slide small learned filters across the image to capture spatial hierarchies, allowed for more nuanced interpretations of visual data. In 2012, a watershed moment occurred when a CNN developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton won the prestigious ImageNet competition by a wide margin, with a top-5 error of roughly 15% against about 26% for the runner-up. This accomplishment not only underscored the prowess of CNNs in image classification but also reignited interest in neural networks and deep learning across multiple disciplines.
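The core operation inside a convolutional layer can be sketched without any framework. The example below is a simplified illustration: it assumes a single channel, no padding, and a stride of one. The edge-detecting filter is written by hand, whereas a real CNN learns its filters during training.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# A 4x4 image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical hand-written filter that responds to left-to-right brightening.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d(image, vertical_edge)
# feature_map == [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The same small filter is reused at every position, so the layer detects the edge wherever it appears while needing only four weights. Stacking such layers lets later filters combine simple features into more complex ones.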

Furthermore, the evolution of recurrent neural networks (RNNs) and their derivatives, such as Long Short-Term Memory (LSTM) networks, heralded a breakthrough in processing sequential data such as time series and natural language. These architectures became crucial in applications ranging from language translation to sentiment analysis. Because an RNN carries a hidden state from one step of the sequence to the next, it can capture context more effectively than feedforward networks, which treat each input independently.
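A single-unit vanilla RNN is enough to show what carrying a hidden state means. The weights below are arbitrary illustrative values rather than trained ones, and the sketch leaves out the gating mechanisms that LSTMs add on top of this basic recurrence.

```python
import math


def rnn_step(hidden, x, w_hidden=0.5, w_input=1.0, bias=0.0):
    """One step of a single-unit RNN: h_t = tanh(w_h * h_{t-1} + w_x * x_t + b)."""
    return math.tanh(w_hidden * hidden + w_input * x + bias)


def run_rnn(sequence):
    hidden = 0.0  # initial state before any input is seen
    for x in sequence:
        hidden = rnn_step(hidden, x)
    return hidden


# The same values in a different order leave the network in a different state.
early_signal = run_rnn([1.0, 0.0, 0.0])  # the input arrives first and then fades
late_signal = run_rnn([0.0, 0.0, 1.0])   # the input arrives last and is still fresh
```

The final state depends on when each input arrived, not only on which inputs were seen, and that is how the network encodes order and context. The fading of the early signal also hints at why plain RNNs struggle with long sequences, a limitation LSTMs were designed to address.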

As neural networks thrived, so did the need for large-scale datasets. The growth of the Internet and of data-generating devices sparked an explosion of available information. Efforts such as the ImageNet dataset provided researchers with millions of labeled images to train their models, leading to new levels of accuracy in object recognition. With the rise of Big Data, the constraint of limited training data eased, and deep learning’s potential surged.

The challenge of computational complexity remained, but advances in hardware continued to pave the way. Alongside GPU technology, the utilization of Tensor Processing Units (TPUs), specially designed for machine learning tasks, further accelerated the training of deep neural networks. These innovations shaped a potent synergy—the ability to iterate rapidly on deep architectures, leading to models that were increasingly sophisticated and capable of unprecedented tasks.

Notably, the application of neural networks expanded beyond just academic curiosity. Industries started capitalizing on their capabilities, from self-driving cars utilizing CNNs for object detection to financial institutions employing them for fraud detection—signaling a shift toward operational practices driven by advanced machine learning models.

The proliferation of frameworks such as TensorFlow, Keras, and PyTorch democratized access to deep learning methodologies. These tools simplified the implementation of complex architectures, allowing a broader audience to experiment with neural networks, fueling innovation from startups to established tech giants.

With these advancements, the concept of neural networks transformed from a niche area of research to a mainstream technological marvel, prompting experts to rethink the boundaries of artificial intelligence. As the story of neural networks continues to unfold, one thing is certain: the journey from the Perceptron to deep architectures has not only reshaped AI but has also laid the foundation for the next generation of intelligent systems.

Conclusion: Tracing the Path of Neural Network Innovation

The journey from the Perceptron to today’s sophisticated deep architectures encapsulates a remarkable evolution in the field of artificial intelligence. This odyssey reflects humanity’s insatiable quest for knowledge and its relentless drive to push technological boundaries. The foundational concepts laid by early pioneers have blossomed into complex models such as deep belief networks, convolutional neural networks, and recurrent neural networks, each unlocking unprecedented capabilities across various domains.

The integration of Big Data and powerful computational resources, such as GPUs and TPUs, has significantly accelerated the development and implementation of neural networks. These advancements have empowered enterprises to harness data in transformative ways, facilitating breakthroughs in fields ranging from natural language processing to computer vision. As industries embrace these innovations, they reshape how we interact with technology daily—ushering in the era of intelligent systems.

Moreover, the democratization of neural network frameworks like TensorFlow, Keras, and PyTorch fosters a culture of exploration and experimentation. This accessibility invites developers, researchers, and enthusiasts from diverse backgrounds to contribute to a vibrant ecosystem of ideas, accelerating progress in AI.

As we look to the future, neural networks are poised to tackle even more complex challenges. By evolving beyond mere tools, they stand at the forefront of technological advancement, ready to redefine possibilities in our increasingly interconnected world. The evolution of neural networks not only symbolizes the ingenuity of human creativity but also paints a promising picture of a future enriched by intelligent machines. With every iteration, we get closer to realizing the full potential of artificial intelligence, paving the way for innovations that could reshape our lives forever.